Blog

High-Density GPU Colocation: The Enterprise Guide to AI Infrastructure in 2026

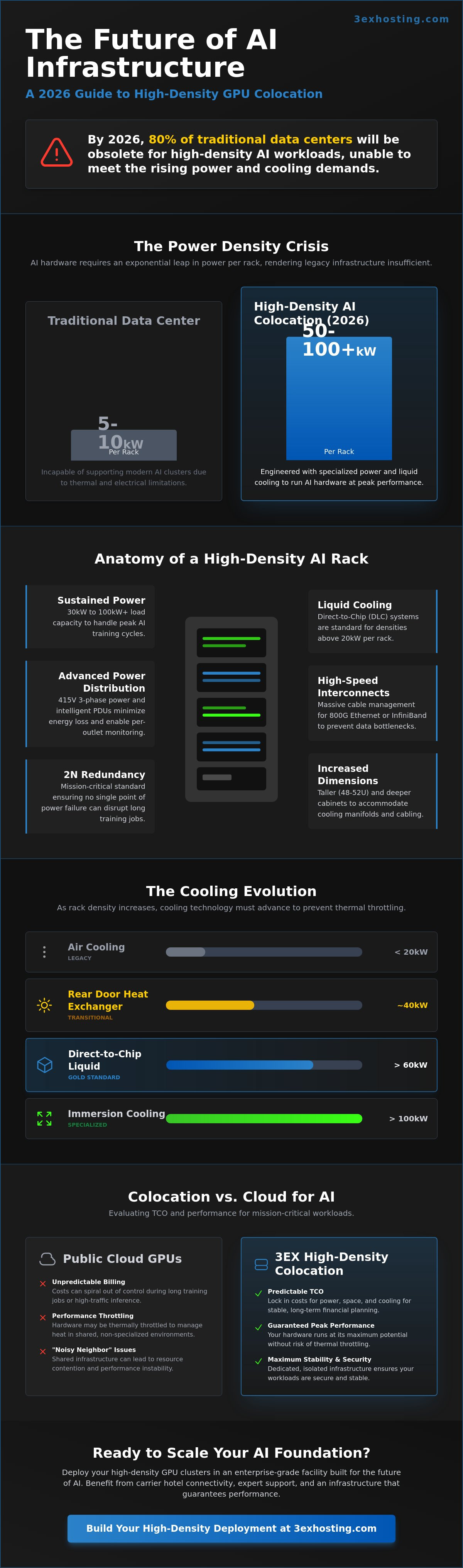

By 2026, industry reports suggest that AI power requirements will push rack densities past 50kW, rendering 80 percent of traditional enterprise data centers incapable of supporting high density GPU colocation. You’ve likely seen your AI performance suffer from thermal throttling or watched your budget disappear into the black hole of unpredictable cloud billing. It’s a common struggle for teams trying to balance cutting-edge innovation with the physical limits of legacy infrastructure. You deserve a stable, professional environment where your hardware can actually perform at its peak without constant oversight.

We’ll show you exactly how to scale your machine learning operations without the fear of downtime or cooling failures. This guide provides a technical roadmap to transition your workloads efficiently, helping you lower your total cost of ownership while maintaining super-fast processing speeds. You’ll learn how to evaluate power specs and liquid cooling architectures to build a reliable foundation for the next generation of AI development. We’re moving past the marketing hype to focus on the technical stability your enterprise requires.

Key Takeaways

- Learn to define 2026 AI requirements by calculating actual power draws and redundancy needs for racks exceeding 20kW.

- Evaluate the long-term TCO and performance advantages of high density GPU colocation compared to standard cloud environments.

- Master the structural and network prerequisites, from floor load capacities to 800G Ethernet architecture, for a successful deployment.

- Discover how to eliminate “noisy neighbor” issues and ensure maximum stability for your machine learning workloads.

- Explore how 3EX Hosting’s enterprise-grade infrastructure and carrier hotel connectivity accelerate your AI scaling strategy.

What is High-Density GPU Colocation?

High density GPU colocation isn’t just a minor upgrade to server rooms. It’s a fundamental shift in how enterprises approach physical infrastructure. By 2026, the industry standard for “high density” has moved past the 20kW per rack threshold. Standard enterprise facilities, which historically averaged 5kW to 10kW per cabinet, can’t sustain the thermal or electrical demands of modern AI clusters. A modern data center designed for 2026 must provide specialized cooling and power delivery systems to support NVIDIA H100 and B200 Blackwell chips. This transition forces a strategic pivot. Companies don’t just lease space by the square foot anymore; they secure it based on power density and cooling capacity.

The evolution from standard colocation to specialized environments is driven by the sheer energy consumption of AI hardware. In 2024, a single rack of H100 servers could pull 40kW. By 2026, multi-node clusters frequently push that requirement toward 100kW. Standard footprints fail because they lack the floor loading capacity for heavy liquid-cooled racks and the airflow management required to prevent immediate hardware failure. Modern infrastructure planning now focuses on “power-first” logic, where the electrical backbone dictates the entire deployment strategy.

The Anatomy of a High-Density Rack

A high-density rack is a complex ecosystem of power and performance. In 2026, these cabinets are built to handle 30kW to 100kW+ of sustained load. They feature specialized PDUs capable of high-voltage distribution to minimize energy loss. The physical dimensions have also changed. Racks are often 48U to 52U high and significantly deeper than traditional units. This extra space accommodates liquid cooling manifolds and massive cable management for InfiniBand interconnects. Every component is optimized for speed. This includes the integration of superfast NVMe storage arrays directly adjacent to the GPU nodes to prevent data bottlenecks during intensive compute cycles.

AI Training vs. Inference Infrastructure

Determining your infrastructure profile depends on whether you’re building or deploying models. Training requires massive, sustained power. These environments prioritize ultra-low latency interconnects and liquid-to-chip cooling to prevent hardware degradation. Inference workloads are different. They often require edge-focused density and high availability rather than raw, continuous power draw. For large-scale deployments, cabinet colocation provides the necessary isolation and power redundancy. Identifying your phase is critical. Training clusters need maximum throughput, while inference nodes need stable, redundant paths to reach end-users without delay. If your project involves real-time data processing, your infrastructure must support bursty power profiles without sacrificing stability.

The Power and Thermal Equation for AI Workloads

AI infrastructure demands more than what’s listed on a hardware sticker. Nameplate ratings are often conservative estimates that don’t reflect the reality of high-performance computing. In practice, a cluster of NVIDIA H100 or Blackwell GPUs can pull 15% to 20% more power during peak training cycles than their steady-state averages suggest. Facilities must account for these micro-spikes to avoid tripping breakers. High density GPU colocation requires a shift from traditional capacity planning to dynamic load modeling. Redundancy also changes at scale. While N+1 might suffice for standard web servers, 2N redundancy is the 2026 standard for mission-critical AI to ensure that a single power path failure doesn’t derail a month-long training job.

Power Usage Effectiveness (PUE) represents the ratio of total power entering the data center to the power used by the GPU hardware, serving as the primary metric for high-density GPU efficiency.

Advanced Cooling: Air, Liquid, and Hybrid

Air cooling hits a physical wall at approximately 20kW per rack. To scale beyond this, Rear Door Heat Exchangers (RDHx) act as a transition technology, removing up to 80% of server heat before it enters the room. For 2026 deployments, Direct-to-Chip Liquid Cooling (DLC) is the gold standard. It brings coolant directly to the cold plate of the GPU, allowing for densities exceeding 60kW per rack. Immersion cooling remains a specialized choice. It’s best suited for 100kW+ environments where hardware is fully submerged in dielectric fluid. These power and cooling challenges require infrastructure that can handle up to 43kW per rack according to Intel IT best practices.

Power Distribution and Redundancy

Standard 120V or 208V power isn’t efficient for AI. 415V power distribution is now a necessity. It reduces amperage and minimizes heat loss within the cabling. You’ll need intelligent PDUs to monitor per-outlet consumption. This data helps balance AI workloads across the cluster to prevent hot spots. Sudden GPU power spikes can overwhelm traditional UPS systems. Modern facilities use lithium-ion battery backups that respond in milliseconds to stabilize the load. If you’re planning a deployment, checking a provider’s cabinet colocation capabilities for 415V support is a critical first step.

Managing these loads requires a partner who understands that stability isn’t just about uptime, it’s about thermal consistency. When your GPUs run at 100% utilization, even a minor cooling fluctuation can trigger thermal throttling, which wastes expensive compute time. Secure, stable environments are the only way to protect your hardware investment and maintain the superfast processing speeds your AI models require.

GPU Cloud vs. High-Density Colocation: A 2026 Comparison

Enterprises in 2026 face a critical infrastructure fork in the road. While the public cloud offers instant scalability, the financial and performance penalties for sustained AI workloads have become impossible to ignore. Choosing high density GPU colocation over a purely cloud-based strategy is no longer just a technical preference; it’s a fiscal necessity for organizations running large-scale training models. Ownership provides a level of predictability that virtualized environments simply cannot replicate. For a comprehensive breakdown of all available options, our AI GPU hosting comparison of cloud, dedicated, and colocation examines the performance, cost, and reliability tradeoffs in detail.

Predicting and Controlling Infrastructure Costs

Cloud pricing models are often deceptive. While the entry price for a GPU instance looks manageable, hidden costs like data egress fees and premium storage tiers quickly inflate the monthly bill. In a three-year hardware lifecycle, the total cost of ownership for dedicated hardware in a colocation facility is often 35% to 50% lower than equivalent cloud instances. You trade the variable, often volatile OPEX of the cloud for a stable, predictable cost structure. This allows your finance team to forecast budgets without fearing a sudden spike in API usage or data transfer volumes. Before committing to a strategy, it’s vital to evaluate your cabinet needs to understand the power-to-space ratio required for your specific hardware.

Latency and Data Throughput

The movement toward high-density computing has shifted the focus from raw processing power to data ingestion speeds. In a colocation environment, you utilize physical cross-connects that provide dedicated 100Gbps or 400Gbps links directly to your storage arrays. This eliminates the “noisy neighbor” effect where other tenants on a shared cloud network can throttle your I/O performance. Physical proximity to carrier hotels ensures that distributed AI applications maintain sub-millisecond latency. For a deeper look at how these architectures compare, see our AI Cloud Computing Hosting Guide.

Data sovereignty and security remain the strongest arguments for physical control. When you own the hardware, you control the entire security stack, from the BIOS to the physical disk encryption. For industries like healthcare or defense, where AI models train on highly sensitive datasets, the risk of a multi-tenant cloud environment is often too high. Organizations that require complete infrastructure autonomy should explore enterprise private suites designed for colocation sovereignty, which provide dedicated power paths and bespoke cooling configurations that shared environments simply cannot offer. High density GPU colocation allows you to maintain strict compliance while still accessing the massive power densities required for modern H100 or B200 clusters.

The most successful enterprises in 2026 use a hybrid approach. They place their baseline training workloads in a colocation facility to maximize ROI and performance. They only turn to the cloud for temporary burst capacity or initial testing. This strategy ensures that the bulk of your AI investment builds equity in your own infrastructure rather than disappearing into a cloud provider’s quarterly earnings report. To see how this plays out in practice, explore our enterprise case study on high-performance GPU cloud hosting and the real-world scaling strategies that eliminate throttling and unpredictable costs.

Actionable Guidance: Preparing for GPU Deployment

Successful high density GPU colocation requires a shift from traditional server management to industrial-grade infrastructure planning. You can’t treat a rack of NVIDIA H100s like a standard web server cluster. The power draw and heat dissipation are only half the battle; the physical and logical preparation must be flawless to avoid expensive downtime. A structural audit is the first non-negotiable step. You must verify that the facility floor can handle concentrated loads, as modern AI clusters often concentrate massive weight in a small footprint. The burn-in period consists of running intensive diagnostic stress tests on new GPU hardware for 48 to 72 hours to identify infant mortality failures before the system enters production.

Network architecture needs to support the massive east-west traffic common in machine learning workloads. This means designing for InfiniBand or 400G/800G Ethernet to prevent bottlenecks during model training. Security protocols must also scale with the value of the assets. We implement multi-factor biometric access and dedicated cage-level surveillance for every high-density deployment. These layers ensure that only authorized personnel interact with your hardware, providing a recorded audit trail for compliance and peace of mind.

The Physical Logistics of High-Density Racks

Weight management is a critical safety concern in 2026. A fully loaded GPU rack can easily exceed 3,000 lbs, which is double the capacity of many older raised-floor environments. You need reinforced flooring or solid slab designs to prevent structural failure. Cable management also becomes a complex puzzle. AI interconnects require hundreds of high-speed fiber or Direct Attach Copper (DAC) cables that must be routed without blocking critical airflow. Proper organization prevents “cable dams” that lead to localized hotspots and hardware throttling. Learn about custom cage solutions designed to handle these specific physical requirements.

Operational Continuity and Remote Management

You can’t rely on local staff for specialized GPU troubleshooting. 24/7 remote hands are vital because a hung GPU node can stall an entire training job, costing thousands of dollars in wasted compute time every hour. Proactive monitoring is the only way to stay ahead of hardware fatigue. We set aggressive threshold alerts for both temperature and power draw at the PDU level. If a rack exceeds its 35kW or 50kW limit, our systems flag it instantly. For more insights on where this technology is heading, read about The Future of GPU Server Hosting.

Ready to scale your AI infrastructure with a partner who understands the technical demands of 2026? Request a custom high-density configuration quote today.

Scaling Your AI Foundation with 3EX Hosting

3EX Hosting builds the bridge between raw AI potential and operational reality. We’ve engineered our facilities to handle the rigorous power and cooling demands of high density GPU colocation without compromise. Our infrastructure supports rack densities exceeding 30kW per cabinet, allowing enterprises to deploy H100 or B200 clusters in optimized configurations. We focus on technical stability so your engineering team can focus on model accuracy.

Speed defines AI performance. Our carrier hotel connectivity ensures your models communicate with sub-millisecond latency across global backbones. By placing your hardware at the heart of the network, you eliminate the bottlenecks that often plague remote or secondary data centers. It’s about the superfast throughput required for real-time inference and massive dataset synchronization during distributed training cycles.

Deployment shouldn’t be a hurdle for your growth. We offer comprehensive move-in assistance to manage the complex logistics of high-value GPU hardware. Once your gear is racked, our 24/7 expert remote hands act as a direct extension of your internal IT team. Whether you need a component swap or a physical port verification, our technicians respond in minutes to maintain your uptime.

Enterprise-Grade Security and Compliance

AI training often involves proprietary datasets or sensitive PII that requires maximum protection. Our facilities maintain strict SOC2 and HIPAA compliance standards to ensure your data stays protected at rest and in transit. Physical security measures include multi-factor authentication, biometric access points, and continuous 24/7 video surveillance. You can explore our data center features to see how we harden our environment against unauthorized access and environmental threats.

Ready to Scale Your AI Infrastructure?

We don’t believe in one-size-fits-all solutions for high density GPU colocation. Our process begins with a technical consultation where we map your specific kW requirements to our cabinet or cage solutions. We perform a detailed site audit and develop a deployment plan tailored to your specific GPU architecture. If your project requires complete physical isolation, we design private data center suites built to your exact power and cooling specifications. For enterprises that need full operational autonomy with dedicated power paths and compliance-ready environments, our enterprise private suites for colocation sovereignty provide the highest level of infrastructure control available in 2026. Get a custom quote for your GPU colocation needs today to secure your 2026 AI roadmap.

Build a Scalable Foundation for Your AI Ambitions

Success in 2026 depends on infrastructure that scales as fast as your models. Enterprise GPU clusters now require power densities exceeding 50kW per rack, a benchmark that traditional facilities simply can’t meet. By moving to high density GPU colocation, you gain the technical stability needed for sustained compute performance without the overhead of building your own tier-4 data center. Our infrastructure provides carrier hotel connectivity for maximum throughput and 24/7 Remote Hands support for mission-critical hardware. You’ll have access to customizable high-density cabinet and cage solutions designed specifically for the thermal demands of next-generation silicon. It’s time to stop worrying about power limits and focus on your next breakthrough. We’re here to ensure your hardware stays cool, connected, and superfast. Let’s get your deployment ready for the future today. Your data deserves a home that’s as ambitious as your vision.

Request a High-Density GPU Colocation Quote

Frequently Asked Questions

What is the minimum power density required for GPU colocation?

High density GPU colocation requires a minimum power density of 20kW per rack to support modern enterprise hardware. While traditional setups operate at 5kW, AI-driven workloads in 2026 demand significantly more power. Facilities must provide 30kW to 50kW per rack to ensure stable performance for H100 clusters. This ensures your hardware runs at peak capacity without power-related throttling or unexpected shutdowns.

Can standard air cooling handle a rack full of NVIDIA H100s?

Standard air cooling cannot efficiently manage a rack fully populated with NVIDIA H100 GPUs. An H100 SXM5 module has a TDP of 700W, and a full rack of 8 nodes can exceed 40kW of heat. Traditional CRAC units struggle to dissipate this intense thermal load. High-density facilities use Rear Door Heat Exchangers or Containment Systems to maintain safe operating temperatures for your equipment.

What is floor loading and why does it matter for GPU colocation?

Floor loading is the maximum weight a data center floor can support per square foot. It’s critical because a fully loaded GPU rack can weigh over 3,000 pounds. Most standard data centers only support 250 pounds per square foot. High-density facilities must support 500 pounds per square foot to prevent structural failure or tile damage. This weight capacity is a non-negotiable safety requirement for AI infrastructure.

How does high-density colocation improve AI training times?

High-density setups improve AI training times by enabling shorter cable runs and faster interconnects between nodes. Using InfiniBand at 400Gbps or 800Gbps reduces data transfer latency between servers. This architectural proximity ensures GPUs spend more time processing and less time waiting for data. Training a Large Language Model can be 25% faster when nodes are physically consolidated in a professional high-density environment.

Is liquid cooling mandatory for all high-density GPU deployments?

Liquid cooling isn’t mandatory for all deployments, but it’s essential for densities exceeding 30kW per rack. Air cooling reaches its physical limits as heat production increases. Direct-to-Chip or Immersion Cooling provides 1,000 times the heat-carrying capacity of air. Facilities built after 2024 often integrate liquid loops to support 100kW rack configurations. It’s the most reliable way to prevent thermal throttling during intense workloads.

What are remote hands and why are they vital for GPU hosting?

Remote hands are on-site technical experts who perform physical tasks on your hardware. They’re vital for GPU cloud hosting because high-end servers require specialized handling for component swaps or cabling. Having 24/7 access to experts means you don’t need to fly your own engineers to the site for a drive replacement or a hard reboot. This ensures 99.999% uptime for your most critical AI projects.

How do cross-connects impact AI performance?

Cross-connects impact AI performance by providing direct, low-latency links between your clusters and storage providers. These physical fiber connections eliminate the lag associated with the public internet. By utilizing 100Gbps cross-connects, you achieve sub-millisecond latency. This is crucial for real-time inference and distributed training across multiple high density GPU colocation zones. It ensures your superfast NVMe storage stays synchronized with your processing power.

What is the difference between high-density and standard colocation?

The primary difference lies in the infrastructure’s ability to handle extreme power and heat. Standard colocation supports 5kW to 10kW per rack. High-density colocation starts at 20kW and scales to 100kW or more. It also features reinforced flooring and advanced cooling systems like Rear Door Heat Exchangers. Standard sites simply don’t have the electrical or thermal headroom for modern AI hardware produced in 2026. To understand how these options stack up against cloud and dedicated server alternatives, review our detailed AI GPU hosting comparison guide for a full analysis of cost, performance, and scalability tradeoffs.

SUPPORT

SUPPORT

3EX United States

3EX United States