High-Density Colocation Providers: The 2026 Enterprise Buying Guide

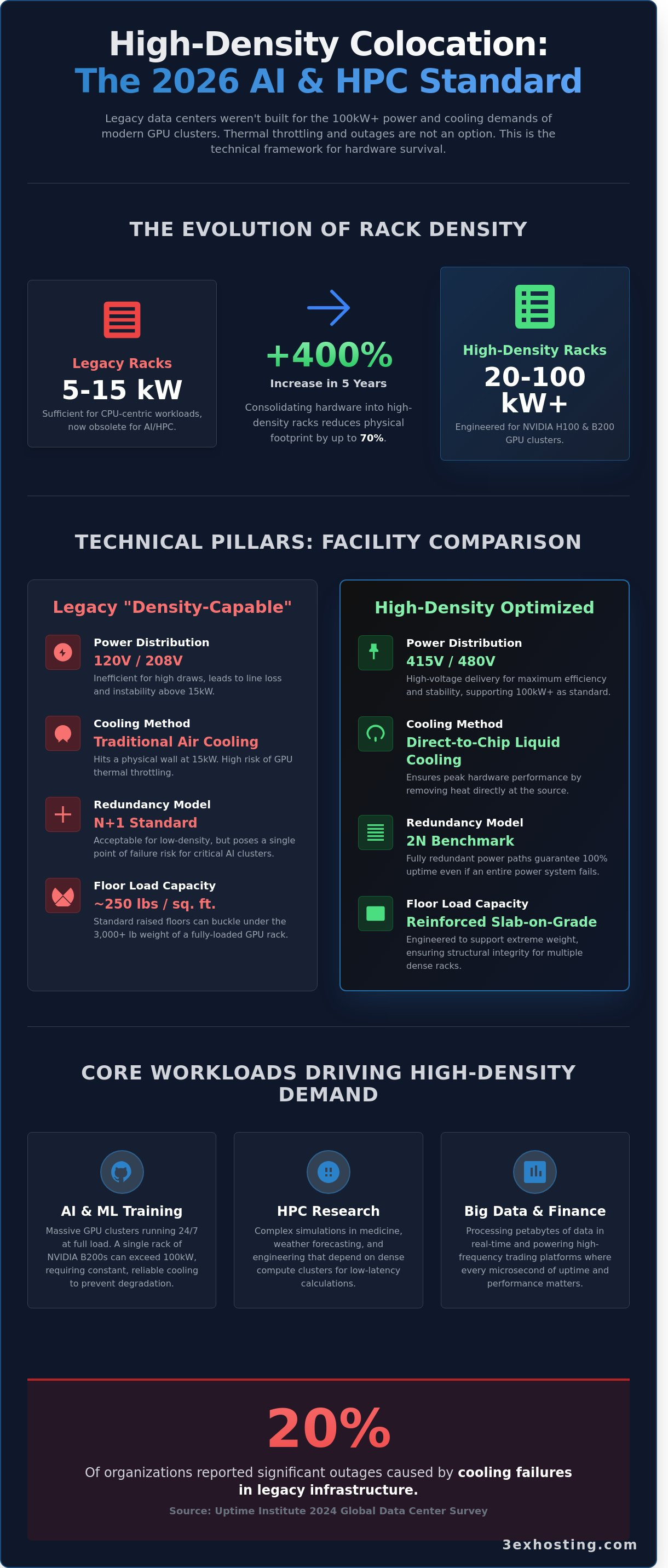

How many rack-level thermal shutdowns will your AI deployment tolerate before the project ROI vanishes? According to Uptime Institute’s 2024 Global Data Center Survey, 20% of organizations reported significant outages caused by cooling failures in legacy infrastructure. As enterprise demand for Blackwell and H100 clusters surges, selecting the right high-density colocation providers becomes a matter of hardware survival rather than just leasing space and power.

You probably know that standard data centers weren’t built to handle 50kW racks. You’re likely tired of seeing GPU performance dip due to thermal throttling or worrying if your current provider can actually deliver the redundant power they promised on paper. This guide provides the technical framework to evaluate providers capable of sustaining 100% uptime for liquid-cooled AI and HPC workloads. You’ll learn to verify cooling efficiency and specialized on-site support protocols that protect your high-value hardware. We’ll break down the 2026 standards for power density, PUE metrics, and the critical infrastructure requirements for seamless GPU integration.

Key Takeaways

- Learn to identify facilities capable of supporting 20kW to 100kW+ per rack to meet the extreme power demands of NVIDIA H100 and B200 GPU clusters.

- Establish a rigorous technical framework for evaluating high-density colocation providers based on power distribution, floor load capacity, and advanced cooling methodologies.

- Discover why legacy “raised floor” environments pose a risk to AI workloads and how Direct-to-Chip cooling ensures maximum hardware performance.

- Access a comprehensive migration checklist to audit hardware kVA requirements and ensure network pathing can sustain data-heavy HPC traffic.

- Understand the strategic advantages of choosing a carrier-neutral, purpose-built facility designed for the stability and scalability of next-generation enterprise computing.

What Defines High-Density Colocation Providers in 2026?

In 2026, high-density colocation isn’t a niche requirement; it’s the baseline for enterprise AI and large-scale data processing. While 5kW per rack sufficed for years, modern high-density colocation providers now engineer environments to support 20kW to 100kW+ per cabinet. This shift stems from the transition from CPU-centric processing to GPU-heavy architectures. Hardware like the NVIDIA H100 and the newer B200 Blackwell chips demand power and cooling levels that traditional infrastructure cannot provide. A standard data center built in 2015 often struggles above 15kW. Modern facilities utilize liquid cooling and rear-door heat exchangers (RDHx) to maintain technical stability.

Distinguishing between “density-capable” and “density-optimized” facilities is vital for 2026 planning. A density-capable provider might use temporary cooling units or “hot aisle” containment to manage a single 20kW rack. In contrast, a density-optimized provider builds the entire facility around liquid cooling loops, high-voltage power distribution, and reinforced flooring. These optimized sites handle 100kW racks as a standard operation, not an exception. For enterprises, this means your infrastructure stays cool and performs at peak speeds without the risk of thermal throttling.

The Evolution of Rack Density

Rack density has increased by 400% in the last five years. Legacy 5kW racks are becoming obsolete for anything beyond basic web hosting. Traditional air cooling runs out of headroom between roughly 15kW and 25kW per rack because the airflow needed to carry the heat away becomes impractical to deliver. By consolidating hardware into high-density racks, enterprises reduce their physical footprint by up to 70%. This consolidation lowers cross-connect costs and simplifies cable management. It’s a more efficient way to scale. Using specialized cabinet colocation ensures your hardware has the power overhead needed for future expansion.

Core Workloads Driving High-Density Demand

Three main workloads push these limits in 2026. First, Generative AI training clusters require massive throughput. A single rack of NVIDIA B200 units can exceed 100kW. Second, High-Performance Computing (HPC) used in scientific research depends on dense clusters for low-latency calculations. Third, financial institutions use high-density setups for high-frequency trading platforms where every microsecond matters. These workloads require the stability that only optimized providers offer. You don’t want to risk hardware degradation because of insufficient cooling. Providers also see heavy demand from large-scale analytics, so capacity planning usually centers on these categories:

- AI and ML Training: Massive GPU clusters that run 24/7 at full load.

- HPC Research: Complex simulations for weather, medicine, and engineering.

- Big Data Analytics: Processing petabytes of information in real-time.

Choosing the right partner means looking beyond the square footage. It’s about the power density and the cooling technology that keeps your systems running at lightning-fast speeds. Reliability isn’t just a promise; it’s a result of superior engineering.

The Technical Pillars: Power, Cooling, and Floor Load

High-density colocation providers are no longer just selling floor space. They’re selling the specialized engineering required to support 35kW to 100kW per cabinet. Standard data centers often cap out at 10kW per rack, which is insufficient for the 2026 enterprise landscape dominated by AI and machine learning. To maintain stability, facilities must transition from traditional 120V/208V power to 415V or 480V distributions. This reduces line loss and allows for the massive current draws required by GPU clusters.
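The effect of distribution voltage on current and line loss can be sketched with a little arithmetic. This is a minimal illustration, assuming a balanced three-phase load and a power factor of 0.95. The function names and the power factor are assumptions made for the example, not figures from any provider’s specification.

```python
import math


def three_phase_current_amps(load_kw: float, line_voltage: float,
                             power_factor: float = 0.95) -> float:
    """Line current for a balanced three-phase load: I = P / (sqrt(3) * V * PF)."""
    return load_kw * 1000.0 / (math.sqrt(3) * line_voltage * power_factor)


def relative_conductor_loss(v_low: float, v_high: float) -> float:
    """Ratio of resistive (I^2 * R) loss at v_high versus v_low.

    Assumes the same load, power factor, and conductor in both cases,
    so current scales inversely with voltage.
    """
    return (v_low / v_high) ** 2


if __name__ == "__main__":
    for volts in (208, 415, 480):
        amps = three_phase_current_amps(50, volts)
        print(f"50 kW at {volts} V draws about {amps:.1f} A per phase")
    ratio = relative_conductor_loss(208, 415)
    print(f"Loss at 415 V relative to 208 V: {ratio:.2f}")
```

For the same load and conductor, moving from 208V to 415V roughly halves the current, and resistive loss falls with the square of that reduction.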

Redundancy models have also evolved. While N+1 (one extra component for every group of active units) works for standard workloads, 2N redundancy is the benchmark for high-density environments. This ensures that even if an entire power path fails, the 50kW load doesn’t crash. Managing these extreme draws requires a deep understanding of Energy-Efficient Data Center Design to prevent localized hot spots from triggering cascading failures.
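The difference between redundancy models is easier to compare as unit counts. The sketch below is a simplified model that only counts equally sized units. It ignores the independent distribution paths that a real 2N design also requires, and the function names and example sizes are hypothetical.

```python
import math


def units_required(load_kw: float, unit_kw: float, scheme: str) -> int:
    """Return how many power or cooling units a redundancy scheme calls for."""
    n = math.ceil(load_kw / unit_kw)  # N: minimum units needed to carry the load
    extra = {"N": 0, "N+1": 1, "N+2": 2}
    if scheme in extra:
        return n + extra[scheme]
    if scheme == "2N":
        return 2 * n  # two fully sized sets of units
    raise ValueError(f"unknown scheme: {scheme}")


def carries_load(installed_units: int, failed_units: int,
                 unit_kw: float, load_kw: float) -> bool:
    """True if the units still running can carry the full load."""
    return (installed_units - failed_units) * unit_kw >= load_kw


if __name__ == "__main__":
    load_kw, unit_kw = 500, 200  # hypothetical hall load and unit size
    for scheme in ("N", "N+1", "N+2", "2N"):
        print(scheme, units_required(load_kw, unit_kw, scheme))
```

With these example numbers, an N+1 design survives one unit failure but not two, while a 2N design still carries the load after losing an entire set of units.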

Floor weight capacity is a frequently overlooked constraint. A single rack of NVIDIA B200 Blackwell systems can weigh over 3,000 pounds. Standard raised floors rated for 250 pounds per square foot are not designed for that concentrated load. Modern high-density sites utilize reinforced slab-on-grade flooring or heavy-duty structural steel frames capable of supporting 500 to 600 pounds per square foot to ensure physical equipment safety.
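A quick first-pass check compares a rack’s weight spread over its footprint with the floor rating. The sketch below assumes a 24 inch by 48 inch cabinet footprint, which is an illustrative value. Real structural ratings also distinguish uniform, point, and rolling loads, so treat this as a screening calculation rather than an engineering sign-off.

```python
def footprint_load_psf(rack_weight_lb: float, width_in: float = 24.0,
                       depth_in: float = 48.0) -> float:
    """Average load in pounds per square foot over the cabinet footprint."""
    area_sqft = (width_in / 12.0) * (depth_in / 12.0)
    return rack_weight_lb / area_sqft


def floor_is_adequate(rack_weight_lb: float, floor_rating_psf: float,
                      width_in: float = 24.0, depth_in: float = 48.0) -> bool:
    """True if the averaged footprint load is within the floor rating."""
    return footprint_load_psf(rack_weight_lb, width_in, depth_in) <= floor_rating_psf


if __name__ == "__main__":
    load = footprint_load_psf(3000)
    print(f"3,000 lb rack: {load:.0f} lb/sq ft over its footprint")
    for rating in (250, 500):
        print(f"Floor rated {rating} lb/sq ft adequate: {floor_is_adequate(3000, rating)}")
```

Under these assumptions a 3,000 pound rack exceeds a 250 pounds per square foot rating and fits within a 500 pounds per square foot rating.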

Advanced Thermal Management

Air cooling reaches its practical limit around 20kW to 25kW per rack. To go higher, high-density colocation providers utilize Rear Door Heat Exchangers (RDHx) or Direct-to-Chip cooling. Direct-to-chip systems circulate coolant through cold plates mounted directly on the processors, removing heat 20 times more effectively than air. Immersion cooling, where servers are submerged in dielectric fluid, represents the 2026 gold standard for 100kW+ racks, though it requires specialized chassis. Hot and cold aisle containment remains essential to prevent air mixing and keep PUE low. Power Usage Effectiveness (PUE) is the ratio of total facility power to IT equipment power. In 2026, liquid-cooled high-density facilities aim for a PUE below 1.15 to demonstrate operational efficiency.
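PUE is simple to compute from two meter readings, which makes it a useful figure to request during a site audit. The sketch below assumes you have a total facility power reading and an IT load reading in the same units. The function names and sample readings are hypothetical.

```python
def pue(total_facility_kw: float, it_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_kw


def overhead_kw(it_kw: float, pue_value: float) -> float:
    """Power spent on cooling, distribution losses, and other non-IT loads."""
    return it_kw * (pue_value - 1.0)


if __name__ == "__main__":
    it_load = 1000.0  # hypothetical IT load in kW
    for target in (1.15, 1.5):
        print(f"PUE {target}: about {overhead_kw(it_load, target):.0f} kW of overhead")
```

At the same IT load, a facility at PUE 1.5 spends more than three times as much power on overhead as one at 1.15.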

Power Redundancy and Distribution

Traditional underfloor “whip” delivery is being replaced by overhead busway distribution. Busways allow for rapid scaling and better airflow by removing cable clutter from the floor plenum. You’ll need intelligent PDUs (Power Distribution Units) at the rack level to monitor real-time consumption and prevent circuit overloads. These PDUs manage the “surge” requirements of high-performance hardware, which can spike significantly during large model training cycles. If you’re looking for a facility that handles these technical complexities, you might want to explore specialized cabinet colocation options that offer these modern power configurations.

Evaluating Providers: Legacy vs. High-Density Optimized

Enterprises in 2026 can’t afford to misjudge infrastructure. Legacy facilities designed for 5kW racks fail when faced with 50kW AI clusters. You need a framework that separates marketing claims from technical reality. Most leading high-density colocation providers have transitioned to slab-on-grade flooring. Raised floors are often not rated for the weight of liquid-cooled manifolds or high-density server racks that exceed 3,000 pounds. Airflow management in legacy builds is another failure point. High-density gear creates hot spots that traditional CRAC units can’t mitigate. This leads to thermal throttling and hardware degradation.

- Floor Load Capacity: Legacy raised floors support 250 lbs/sq ft, while modern high-density slabs support 500 to 600 lbs/sq ft, enough for racks that exceed 3,000 pounds.

- Cooling Redundancy: Look for N+2 configurations that support rear-door heat exchangers or direct-to-chip liquid cooling.

- Power Density: Ensure the facility can deliver 30kW to 100kW per rack without localized power sags.

Choosing a provider based on legacy standards is a risk to your hardware’s lifespan. Modern providers prioritize technical stability and provide the cooling headroom necessary for GPU-intensive workloads. 3EX Hosting focuses on these stable, high-performance environments where your equipment stays cool and operational 24/7.

Connectivity and Interconnection

Low latency is the lifeblood of AI. The impact of cross-connect services on multi-cloud AI strategies is measurable. A 1-millisecond delay in data egress can throttle model training by 15% over a 24-hour cycle. Look for carrier-neutral facilities with high carrier density. Carrier hotels are essential because they provide direct access to subsea cables and major IXPs. This proximity reduces hop counts. 3EX Hosting ensures your data moves through superfast, optimized paths without unnecessary bottlenecks. Direct peering with major cloud on-ramps is mandatory for hybrid AI deployments.

Security and Compliance for Mission-Critical Data

Physical security must match the digital value of your LLMs. Standard cages aren’t enough for 2026. Top-tier high-density colocation providers combine multi-factor authentication, including biometrics, with anti-tailgating sensors. Compliance isn’t optional. Your provider must maintain a SOC 2 Type II attestation and support HIPAA and PCI DSS compliance to meet 2026 regulatory demands. For the highest level of isolation, enterprises choose private data center suites. These suites offer dedicated cooling loops and power distribution. They eliminate the “noisy neighbor” effect both in terms of thermals and network congestion. It’s a professional, stable foundation for your most sensitive workloads.

The High-Density Migration Checklist

Moving your infrastructure to a high-density environment requires more than just a truck and a lift. High-density colocation providers expect precision in your power and weight specifications. A typical 2026 enterprise deployment involves GPU-heavy clusters that draw between 20kW and 50kW per rack. If your hardware audit misses the mark by even 5%, you risk tripped breakers or thermal throttling. Start with a detailed inventory that lists the peak kVA for every chassis. You should add a 20% buffer for future growth to avoid costly mid-contract re-cabling or power upgrades.
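The hardware audit described above can be sketched as a small script. It sums peak kVA per rack from an inventory, applies the 20% growth buffer, and flags racks that exceed a contracted allocation. The inventory fields, rack names, and contracted figure are hypothetical placeholders for your own data.

```python
from collections import defaultdict

GROWTH_BUFFER = 0.20  # 20% headroom for future growth, as suggested in the text


def rack_budgets_kva(inventory, buffer=GROWTH_BUFFER):
    """Sum peak kVA per rack and add the growth buffer."""
    totals = defaultdict(float)
    for item in inventory:
        totals[item["rack"]] += item["peak_kva"] * item["qty"]
    return {rack: round(kva * (1 + buffer), 2) for rack, kva in totals.items()}


def over_contract(budgets, contracted_kva):
    """Return the racks whose buffered budget exceeds the contracted kVA."""
    return sorted(rack for rack, kva in budgets.items() if kva > contracted_kva)


if __name__ == "__main__":
    # Hypothetical inventory: one entry per chassis model per rack.
    inventory = [
        {"rack": "R01", "chassis": "gpu-node", "peak_kva": 10.0, "qty": 4},
        {"rack": "R01", "chassis": "switch", "peak_kva": 2.5, "qty": 2},
        {"rack": "R02", "chassis": "storage", "peak_kva": 5.0, "qty": 3},
    ]
    budgets = rack_budgets_kva(inventory)
    print(budgets)
    print("Over a 50 kVA allocation:", over_contract(budgets, 50))
```

Running the audit against nameplate peak values rather than average draw keeps the buffer meaningful, since breakers trip on peaks.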

Hardware and Power Planning

Your power strategy dictates your uptime. Selecting the right full cabinet colocation configuration is the first step. High-density racks often require three-phase power and specialized PDUs capable of handling 60A or 80A circuits. Don’t overlook floor loading limits. A fully populated 42U rack of modern AI servers can weigh over 3,000 pounds. Verify that the facility’s raised floors or slab can support this concentrated weight before the hardware arrives on the loading dock. Compatibility with high-amperage pin-and-sleeve connectors is also a requirement for most 415V deployments.
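To size circuits for a rack, it helps to convert breaker ratings into usable kVA. The sketch below assumes three-phase circuits and an 80% continuous-load derating, a common practice in North American installations. The derating factor, the A/B feed doubling, and the function names are assumptions for illustration, and local electrical code governs the real values.

```python
import math


def circuit_capacity_kva(line_voltage: float, breaker_amps: float,
                         continuous_derate: float = 0.8) -> float:
    """Usable kVA of one three-phase circuit after continuous-load derating."""
    return math.sqrt(3) * line_voltage * breaker_amps * continuous_derate / 1000.0


def circuits_needed(rack_kva: float, line_voltage: float, breaker_amps: float,
                    redundant_feeds: bool = True) -> int:
    """Circuits required for a rack; doubled when separate A and B feeds are used."""
    per_circuit = circuit_capacity_kva(line_voltage, breaker_amps)
    count = math.ceil(rack_kva / per_circuit)
    return count * 2 if redundant_feeds else count


if __name__ == "__main__":
    for amps in (60, 80):
        kva = circuit_capacity_kva(415, amps)
        print(f"415 V, {amps} A circuit: about {kva:.1f} kVA usable")
    print("Circuits for a 50 kVA rack on 60 A feeds:", circuits_needed(50, 415, 60))
```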

Network pathing is the next hurdle. High-density workloads often involve massive datasets that require 100GbE or 400GbE uplinks to prevent bottlenecks. You need diverse fiber paths to ensure that a single cable failure doesn’t isolate a 30kW cluster. Integrating disaster recovery is equally critical. In high-density setups, the sheer volume of data makes traditional backup windows impossible. You’ll need synchronous replication or snapshot-based recovery that functions at the hardware level to ensure business continuity.
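A rough transfer-time estimate shows why uplink speed shapes backup and replication planning. The sketch below assumes decimal units (1 TB = 10^12 bytes) and a link efficiency of 80% to account for protocol overhead. Both the efficiency figure and the function name are assumptions for the example.

```python
def transfer_hours(dataset_tb: float, link_gbps: float,
                   efficiency: float = 0.8) -> float:
    """Hours needed to move a dataset over a link at a given usable efficiency."""
    bits = dataset_tb * 1e12 * 8            # terabytes to bits
    usable_bps = link_gbps * 1e9 * efficiency
    return bits / usable_bps / 3600.0


if __name__ == "__main__":
    dataset_tb = 1000  # one petabyte, hypothetical dataset size
    for gbps in (10, 100, 400):
        hours = transfer_hours(dataset_tb, gbps)
        print(f"{dataset_tb} TB over {gbps}GbE: about {hours:.1f} hours")
```

Under these assumptions a petabyte takes more than a day on a single 100GbE uplink, which is why a nightly full backup window stops being realistic at this scale.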

The Remote Hands Advantage

In a high-density environment, things happen fast. High heat output means that a cooling failure can lead to equipment damage in minutes. This makes 24/7 on-site support a requirement, not a luxury. Our guide on Remote Hands Support explains how on-site technicians improve operational efficiency by acting as your eyes and ears on the ground.

You must distinguish between basic Remote Hands and Smart Hands. Basic support covers power cycles and cable swaps. Smart Hands involves complex troubleshooting, OS configurations, and hardware diagnostics. For high-density colocation providers, having a team that understands liquid cooling loops or InfiniBand fabrics is essential for maintaining 99.999% uptime. Professional on-site staff can identify a failing fan or a leak before it triggers a system-wide shutdown.

Ready to secure your infrastructure in a facility built for high-performance workloads? Explore our high-density cabinet options today.

Why 3EX Hosting is the Enterprise Choice for High-Density

3EX Hosting operates at the intersection of power and precision. Our facility isn’t a repurposed office building. It’s a purpose-built environment designed specifically for the rigorous demands of 2026 enterprise workloads. We rank among the leading high-density colocation providers because we solve the thermal and power distribution challenges that stall standard data centers. Our carrier-neutral status transforms our facility into a strategic carrier hotel. This gives you direct, low-latency cross-connects to over 100 global carriers and network providers, ensuring your data moves as fast as your hardware allows.

Reliability is our baseline, not a goal. We maintain a 100% uptime record through N+2 redundancy on all critical power and cooling systems. Every component, from the diesel generators to the cooling chillers, undergoes monthly load testing to ensure zero failure points. This technical excellence provides the stability required for mission-critical applications that can’t afford a single second of downtime.

Scale and Customization

Enterprises don’t fit into a single template. We offer modular growth paths, ranging from secure cage colocation to fully segregated private enterprise suites. If your AI cluster requires rack densities exceeding 35kW, we build the specific cooling and power delivery to match those needs. We integrate these custom setups with robust disaster recovery solutions to protect your operations against regional outages. This flexibility allows 88% of our clients to scale their footprint within the same facility as their compute needs grow.

The 3EX Technical Partnership

You won’t deal with entry-level support tickets or automated responses here. Our clients have direct access to senior infrastructure engineers who understand the nuances of high-density power delivery and liquid cooling integration. We prioritize technical transparency. Our pricing models are based on actual power consumption, not inflated estimates. This level of clarity helps IT directors optimize their budgets with 100% accuracy. As high-density colocation providers, we act as an extension of your internal team, managing the physical layer so you can focus on software innovation. It’s time to move your infrastructure to a facility that’s built for the future of enterprise compute.

Future-Proofing Your 2026 Infrastructure Strategy

Industry projections for 2026 indicate that enterprise rack densities will frequently surpass 30kW to support AI and high-performance computing. Legacy data centers built before the current hardware revolution often fail to meet these rigorous cooling and power requirements. Selecting the right high-density colocation providers is no longer optional for businesses that prioritize long-term stability. 3EX Hosting bridges the gap between raw power and reliable management. Our infrastructure relies on an N+2 redundant power and cooling architecture to eliminate single points of failure. You get immediate access to a carrier-neutral interconnection hub and 24/7 expert remote hands support. We’ve built a foundation where technical excellence meets human expertise. It’s time to move beyond the limitations of standard hosting and embrace a system designed for the next decade of digital growth. Our team ensures your transition is seamless and your hardware remains secure.

Secure Your High-Density Infrastructure with 3EX Hosting. Experience the peace of mind that comes with professional, stable, and superfast infrastructure.

Frequently Asked Questions

What is considered high-density colocation in 2026?

High-density colocation in 2026 refers to rack power requirements that exceed 30kW per cabinet. While standard racks previously averaged 5kW to 10kW, modern AI hardware like NVIDIA H200 clusters now demands between 50kW and 100kW per rack. Providers must deliver specialized power distribution and cooling to sustain these loads without thermal throttling. It’s the technical baseline for any enterprise running large-scale machine learning models.

Can legacy data centers support high-density AI workloads?

Most legacy data centers built before 2015 can’t support high-density AI workloads because they lack the necessary power infrastructure and floor reinforcement. These older facilities typically top out at 10kW per rack and rely on raised floor air cooling. Attempting to run 40kW racks in these environments causes immediate hot spots and power failures. You’ll need facilities designed with 415V or 480V power distribution and reinforced concrete slabs.

How do high-density colocation providers manage heat?

High-density colocation providers manage heat using a combination of Rear Door Heat Exchangers and Direct-to-Chip liquid cooling. These systems remove up to 90% of heat before it ever enters the room. By utilizing closed-loop water systems, providers maintain stable temperatures for 70kW racks. This approach is 40% more efficient than traditional air units used in standard data centers, ensuring your hardware stays safe.
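The physical reason liquid loops cope with these loads is the heat balance Q = m × cp × ΔT. The sketch below estimates the flow needed to carry away a rack’s heat with water and with air. The fluid properties are approximate textbook values, and the 10 K temperature rise is an assumed design point rather than a figure from any specific facility.

```python
# Approximate fluid properties near room temperature (assumed values).
WATER = {"cp": 4186.0, "density": 997.0}  # J/(kg*K), kg/m^3
AIR = {"cp": 1005.0, "density": 1.2}      # J/(kg*K), kg/m^3


def volumetric_flow_m3s(heat_kw: float, fluid: dict, delta_t_k: float) -> float:
    """Flow in m^3/s needed to absorb heat_kw with a temperature rise of delta_t_k."""
    mass_flow_kg_s = heat_kw * 1000.0 / (fluid["cp"] * delta_t_k)
    return mass_flow_kg_s / fluid["density"]


if __name__ == "__main__":
    rack_kw, delta_t = 70.0, 10.0
    water = volumetric_flow_m3s(rack_kw, WATER, delta_t)
    air = volumetric_flow_m3s(rack_kw, AIR, delta_t)
    print(f"Water: about {water * 1000 * 60:.0f} litres per minute")
    print(f"Air:   about {air:.1f} cubic metres per second")
    print(f"Air needs roughly {air / water:.0f} times the volume flow")
```

The gap in volume flow, several thousand times under these assumptions, is why airflow becomes the limiting factor as rack power climbs.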

What are the cost implications of high-density vs. standard colocation?

High-density setups typically carry a 20% to 35% higher monthly recurring cost per rack compared to standard configurations due to specialized infrastructure requirements. However, you’ll see a lower total cost of ownership because you’re consolidating hardware into a smaller physical footprint. By packing 60kW into one rack instead of six 10kW racks, you reduce your overall square footage and cross-connect fees significantly.
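The consolidation argument can be checked with placeholder numbers. The sketch below compares monthly space and cross-connect fees for six standard racks against one high-density rack carrying the 35% premium mentioned above. Every price is hypothetical, and metered power is left out because the 60kW load is the same in both layouts.

```python
def monthly_space_cost(racks: int, base_rack_fee: float, premium: float = 0.0,
                       cross_connects_per_rack: int = 2,
                       cross_connect_fee: float = 0.0) -> float:
    """Monthly rack and cross-connect fees, excluding metered power."""
    per_rack = base_rack_fee * (1 + premium) + cross_connects_per_rack * cross_connect_fee
    return racks * per_rack


if __name__ == "__main__":
    base_fee, xc_fee = 1000.0, 100.0  # hypothetical monthly prices
    standard = monthly_space_cost(6, base_fee, 0.0, 2, xc_fee)
    dense = monthly_space_cost(1, base_fee, 0.35, 2, xc_fee)
    print(f"Six 10kW racks: {standard:.0f} per month")
    print(f"One 60kW rack:  {dense:.0f} per month")
```

In practice a high-density rack may need more cross-connects than a single standard rack, so adjust `cross_connects_per_rack` to match your own network design before drawing conclusions.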

Why is carrier neutrality important for high-density providers?

Carrier neutrality is vital for high-density colocation providers because it prevents vendor lock-in and ensures ultra-low latency for data-heavy AI applications. Facilities with 10 or more on-site carriers allow you to optimize routing for speed and cost. This flexibility is essential when moving petabytes of data between edge devices and your core. It keeps your operations agile, resilient, and super-fast during peak traffic periods.

What should I look for in a high-density SLA?

You should look for a 100% uptime guarantee on power and a specific Thermal Management SLA that defines tight temperature ranges. In a high-density environment, even a 5-minute cooling failure can lead to immediate hardware damage. Ensure the agreement includes 15-minute response times for critical incidents and clear compensation clauses. This level of accountability is the standard for top-tier providers in 2026.
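When comparing SLA percentages, it helps to translate them into allowed downtime. The sketch below does that conversion for a 365-day year. The function name is hypothetical, and the calculation says nothing about how a given provider measures or credits downtime.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: int = 365) -> float:
    """Minutes of downtime permitted by an uptime percentage over a period."""
    total_minutes = period_days * 24 * 60
    return (1 - uptime_percent / 100.0) * total_minutes


if __name__ == "__main__":
    for sla in (99.9, 99.99, 99.999, 100.0):
        minutes = allowed_downtime_minutes(sla)
        print(f"{sla}% uptime allows about {minutes:.2f} minutes per year")
```

A 99.999% commitment allows only a few minutes of downtime per year, so the response-time and compensation clauses matter as much as the headline percentage.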

How does floor load capacity affect high-density rack deployment?

Floor load capacity limits how much heavy AI hardware you can deploy in a single rack position. High-density racks often weigh over 3,000 pounds, requiring floors rated for roughly 500 to 600 pounds per square foot rather than the 250 pounds per square foot typical of legacy raised floors. If the facility doesn’t have reinforced slabs, you won’t be able to deploy fully populated GPU clusters safely. Always verify these structural specifications before you sign a long-term contract.

Is liquid cooling mandatory for high-density colocation?

Liquid cooling becomes effectively mandatory at the upper end of the density range. Air cooling reaches its practical limit around 20kW to 25kW per rack, and above roughly 35kW it can’t move enough heat to keep hardware stable. While some providers use extreme air-flow containment for mid-range loads, liquid cooling is the only stable solution for 100kW cabinets. It’s the most reliable way to ensure your super-fast processors don’t throttle or fail during intensive compute cycles. It’s a requirement for modern performance.

SUPPORT

3EX United States