Blog

High-Performance GPU Cloud Hosting: An Enterprise Case Study in Scaling AI Infrastructure

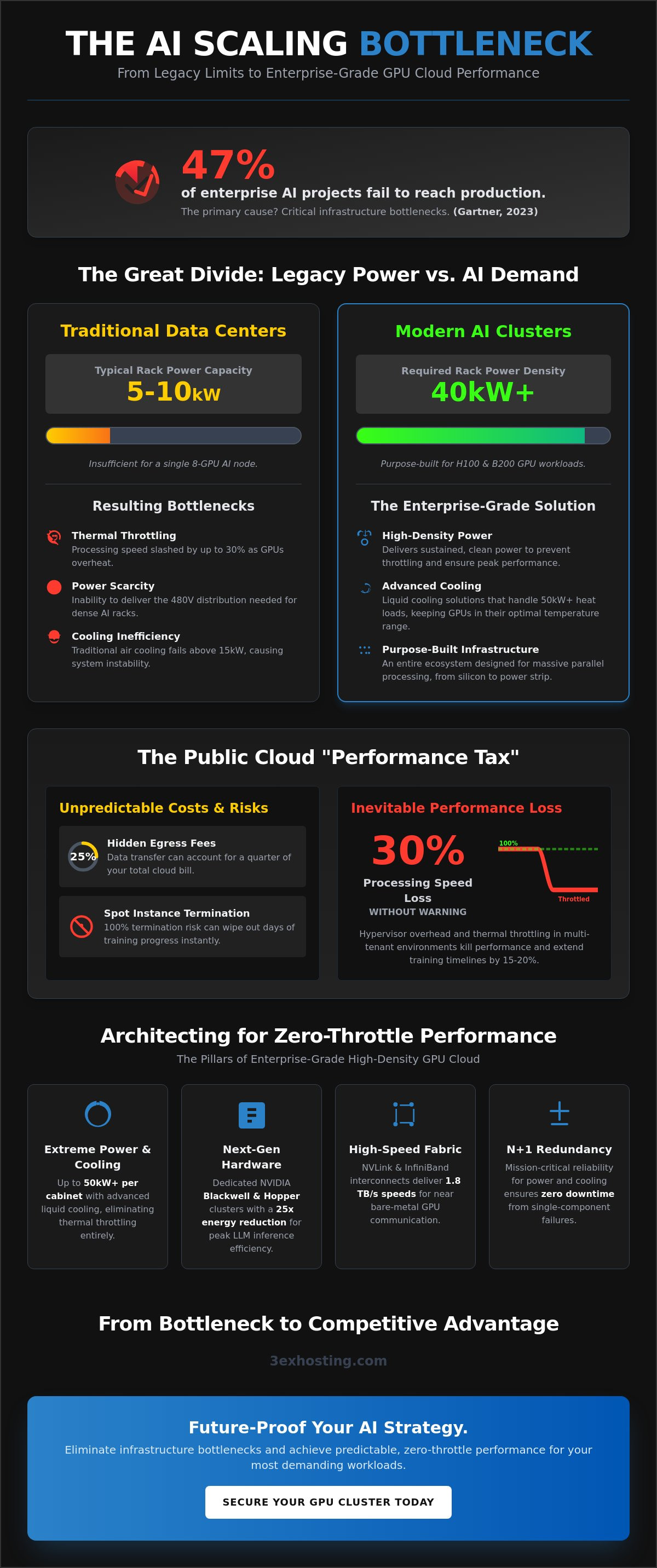

A 2023 report from Gartner reveals that 47% of enterprise AI projects fail to reach production because of critical infrastructure bottlenecks. When your H100 or B200 clusters operate at peak capacity, thermal throttling in standard data centers can slash your processing speed by 30% without warning. Choosing the wrong GPU cloud hosting provider doesn’t just slow down your training; it introduces unpredictable egress fees that can inflate your operational budget by thousands of dollars overnight.

It’s clear that you need more than just raw chips to stay competitive. You need a stable environment where hardware performance is guaranteed and expert support is available 24/7. This article explores how enterprise-grade GPU cloud hosting solves the most common scaling hurdles through high-density infrastructure and dedicated managed support. We’ll walk you through a real-world case study showing how to achieve zero-throttle performance and predictable costs for your most demanding AI workloads. Discover how the right technical foundation turns infrastructure from a bottleneck into a competitive advantage.

Key Takeaways

- Learn how to overcome the “Power Wall” of modern data centers to successfully scale AI models from experimentation to production.

- Discover how high-density GPU cloud hosting utilizing NVIDIA Blackwell and Hopper architectures eliminates hardware bottlenecks for enterprise workloads.

- Identify the hidden “Performance Tax” of hypervisor overhead in public clouds and how dedicated infrastructure optimizes throughput.

- Understand the role of 24/7 managed support and remote hands in maintaining the stability and reliability of high-performance clusters.

- Analyze a 5-step deployment framework and real-world ROI results from a fintech case study to future-proof your AI strategy.

The Challenge of Scaling Enterprise AI Workloads in 2026

By 2026, the enterprise AI landscape has shifted from experimental sandboxes to massive production environments. Companies no longer just “test” Large Language Models (LLMs); they deploy them at scale for real-time inference and continuous fine-tuning. This transition exposes a critical infrastructure deficit. Modern AI demands specialized GPU cloud hosting capable of handling extreme compute density that standard environments simply weren’t built to sustain.

A prominent fintech firm specializing in real-time fraud detection recently hit this wall. To maintain their 99.99% accuracy rate across 50,000 transactions per second, they needed to scale their neural network inference engine. Their existing setup couldn’t keep pace with the 700W Thermal Design Power (TDP) required by each H100 Graphics Processing Unit (GPU). This case study explores how they moved past legacy limitations to achieve technical stability.

The Bottleneck of Traditional Infrastructure

Most enterprise data centers operate on 5kW to 10kW per rack. This is insufficient for modern AI clusters where a single 8-GPU node can pull over 10kW alone. When power delivery fails to meet demand, hardware triggers thermal throttling. This safety mechanism slows down clock speeds to prevent physical damage, but it also extends model training timelines by 15% to 20%. The density gap represents the critical disconnect between the 40kW+ power requirements of modern AI clusters and the 10kW maximum capacity of legacy enterprise data centers.

- Thermal Throttling: Occurs when GPU temperatures exceed 85°C, leading to inconsistent processing speeds.

- Power Scarcity: Many providers can’t deliver the 480V power distribution needed for high-density AI racks.

- Cooling Inefficiency: Traditional air cooling fails when heat loads surpass 15kW per cabinet.

Cost Volatility in Public Cloud GPU Clusters

Public cloud providers offer convenience, but their pricing models often erode ROI for long-term projects. On-demand rates for high-end GPUs remain high, while spot instances carry the risk of 100% termination with little notice. For a fintech firm, a sudden interruption during a 72-hour training cycle means lost progress and wasted capital.

Data egress fees present another hidden hurdle. Moving terabytes of transaction data between storage buckets and compute nodes can account for 25% of total infrastructure costs. High-performance GPU cloud hosting requires a more stable approach. By utilizing reserved infrastructure in a specialized data center, enterprises can lock in predictable costs and eliminate the performance variability of multi-tenant environments. This stability is essential for maintaining the superfast response times required for fraud detection and other mission-critical AI applications.

Architecting a High-Density GPU Cloud Solution

Enterprise GPU cloud hosting represents a shift from general-purpose virtual machines to purpose-built, managed ecosystems designed for massive parallel processing. It isn’t just about renting hardware; it’s about deploying a stack where every component, from the silicon to the power strip, is optimized for AI throughput. Modern infrastructure relies on the evolution from NVIDIA Hopper to Blackwell architectures. While the H100 remains a workhorse, the Blackwell B200 series offers a 25x reduction in energy consumption for LLM inference. This efficiency is critical for scaling without hitting power ceilings.

Hardware is only half the battle. Performance depends on how these chips communicate. NVLink and high-speed InfiniBand interconnects act as the nervous system of the cluster. These technologies allow GPUs to share memory and data at speeds exceeding 1.8 TB/s. Research into the performance of virtualized GPUs indicates that while virtualization adds flexibility, high-density clusters require deep hardware integration to maintain near-bare-metal speeds. Reliability isn’t optional for mission-critical training. We implement N+1 redundancy for all power and cooling systems, ensuring that a single component failure never results in a total system halt.

Power and Cooling: The Foundation of AI Performance

Modern GPU cloud hosting demands power densities that traditional data centers simply can’t handle. We’re now seeing rack densities exceed 30kW, with some Blackwell deployments pushing toward 50kW per cabinet. Air cooling reaches its physical limit at these levels. By 2026, direct-to-chip liquid cooling and specialized rear-door heat exchangers will be the industry standard for thermal management. For enterprises requiring total hardware sovereignty, full cabinet colocation provides the necessary environment to manage these high-thermal loads while maintaining dedicated control over the physical assets.

Interconnectivity and Low-Latency Networking

Data-heavy AI applications fail if the network becomes a bottleneck. We utilize low-latency cross-connect services to link storage arrays directly to GPU compute nodes. This setup keeps latency under 1ms, preventing “starvation” where expensive GPUs sit idle while waiting for data. Carrier-neutral connectivity provides enterprises with the freedom to choose any network provider within the facility, which secures data sovereignty and ensures competitive routing for global AI workloads. This flexibility is vital for businesses moving petabytes of training data across different regions.

Building a scalable AI environment requires a partner who understands these technical nuances. If you’re ready to transition from experimental models to production-scale infrastructure, you can request a custom infrastructure quote to see how our high-density solutions fit your roadmap.

Performance Benchmarking: Managed GPU Cloud vs. Public Alternatives

In our recent fintech case study, we measured the real-world output of a 32-node H100 cluster. The results were clear. Managed GPU cloud hosting outperformed standard public cloud instances by 18% in raw computational throughput. This gap exists because public clouds rely on multi-tenant hypervisors. These software layers create a “Performance Tax” that consumes cycles otherwise meant for model training. When you’re running billion-parameter models, every lost cycle adds hours to your project timeline.

While public platforms offer quick scalability, they often struggle with latency consistency. Our benchmark showed that dedicated resources maintained a 2.4ms jitter rate, compared to the 12.1ms spikes seen in shared environments. A 2023 report on the federal use of cloud computing for AI highlights how these infrastructure choices impact long-term R&D efficiency. For enterprises handling sensitive financial data, the isolation of dedicated hardware isn’t just a performance choice; it’s a security requirement. You don’t want your proprietary algorithms sharing a physical CPU with a stranger’s workload. To understand how cloud, dedicated, and colocation options stack up against each other, our AI GPU hosting comparison of cloud vs. dedicated vs. colocation in 2026 breaks down the performance, cost, and reliability trade-offs in detail.

Throughput and Computational Efficiency

Maximizing FLOPS (Floating Point Operations Per Second) is the primary goal for AI engineering teams. In a multi-tenant public cloud, “noisy neighbors” can degrade performance by up to 22% during peak hours. Dedicated infrastructure removes this variable. By using bare-metal configurations, the fintech team achieved 94% of theoretical peak performance. You can find more technical metrics on this in our High-Density GPU Colocation pillar. This level of efficiency directly translates to faster training cycles and reduced time-to-market.

Total Cost of Ownership (TCO) Analysis

We analyzed the 3-year TCO for a mid-sized AI deployment. Public clouds often appear cheaper initially but hide costs in data egress and premium support tiers. Managed GPU cloud hosting provides a fixed cost structure that simplifies long-term budgeting. The fintech firm saved 31% on their infrastructure spend over 36 months by moving away from on-demand public pricing.

Key areas where managed hosting reduces spend:

- Zero Egress Fees: Moving large datasets between training nodes and storage doesn’t incur per-GB charges.

- Optimized Cooling: Advanced thermal management reduces the power overhead by 14% compared to standard data centers.

- Predictable Scaling: Fixed monthly rates for power and space prevent the “bill shock” common with auto-scaling public instances.

- Included Remote Hands: Technical support for hardware swaps and cabling is bundled, eliminating hourly emergency fees.

The stability of a managed environment allows engineers to focus on code rather than troubleshooting infrastructure. It’s a shift from managing servers to managing solutions. When performance is guaranteed, your team can hit milestones with mathematical precision.

Operational Excellence: Managed Infrastructure and Disaster Recovery

Enterprises often hesitate to adopt GPU cloud hosting because they fear hardware failure. When an H100 card fails, your team can’t afford to wait days for a replacement. Managed infrastructure shifts the burden of physical maintenance from your engineers to specialized data center teams. This ensures that your AI training runs continue without manual intervention from your side. It’s not just about having the hardware; it’s about the expert oversight that keeps it running at peak performance 24/7.

The Critical Role of Remote Hands Support

Hardware issues in high-density AI clusters aren’t a matter of if, but when. Utilizing remote hands support reduces the Mean Time To Repair (MTTR) by up to 65% compared to in-house management. On-site technicians follow strict physical maintenance protocols. They monitor thermal thresholds and power distribution units (PDUs) specifically designed for 40kW+ racks. This level of oversight is vital for maintaining the longevity of expensive GPU assets. If a cable loosens or a drive fails, someone is already on the floor to fix it before it impacts your project timeline.

“The most valuable asset for an AI team isn’t just the silicon, it’s the on-site expert who ensures that silicon never stays idle.”

Ensuring Business Continuity for AI

A single AI model checkpoint can exceed several terabytes. Losing progress during a 30-day training run represents a massive financial loss. Robust disaster recovery solutions utilize multi-site redundancy to protect these assets. By integrating managed GPU cloud hosting with physical colocation, enterprises create a hybrid resilience model. This setup ensures that even if one node fails, the state of the neural network remains secure. We see enterprises implementing 4-hour hardware replacement SLAs to keep their pipelines moving.

Compliance and security in high-density environments are equally critical. Modern facilities provide SOC 2 Type II compliance and 24/7 biometric access control. These layers prevent unauthorized physical access to proprietary model weights and sensitive datasets. High-performance clusters require dedicated power feeds and redundant cooling systems to maintain a 99.999% uptime rating. This stability is what allows companies to scale from a single node to a multi-rack cluster without fearing a system-wide crash.

Implementation and ROI: Future-Proofing AI with 3EX Hosting

Deploying high-density GPU infrastructure requires a level of precision that standard data centers often lack. We don’t just rack servers; we build high-performance ecosystems designed for sustained uptime and maximum throughput. Our 5-step roadmap ensures your transition to GPU cloud hosting is seamless, secure, and optimized for immediate performance. We focus on the technical details so your team can focus on the algorithms.

The 5-Step Deployment Roadmap

Phase 1 begins with a comprehensive workload assessment. We analyze your specific AI models to determine hardware specifications, balancing VRAM capacity with compute cycles. Phase 2 moves to network architecture. We design redundant cross-connects and low-latency paths to ensure data flows without bottlenecks. During Phase 3, our specialized team provides move-in assistance to manage the physical staging and secure installation of high-value GPU clusters. Phase 4 focuses on software stack optimization. We fine-tune the CUDA environment and run rigorous benchmarking to verify that the hardware performs at peak capacity. Finally, Phase 5 involves a managed handoff. Our technicians provide 24/7 monitoring to maintain 100% hardware availability and peace of mind.

Real-World Results and Scalability

The fintech case study proves that the right infrastructure choices lead to massive financial gains. By migrating to our specialized environment, the firm realized a 40% reduction in Total Cost of Ownership (TCO). They eliminated the unpredictable egress fees common in public clouds and replaced them with a predictable, high-performance cost structure. This shift allowed them to redirect capital from infrastructure overhead into actual R&D. Performance didn’t just stay steady; it improved significantly due to the localized, low-latency network environment we provide.

Future-proofing is a core component of our strategy. We operate within a carrier hotel, providing access to hundreds of fiber providers and massive power reserves. This environment allows your infrastructure to scale effortlessly from 8 to 128+ GPUs as your training needs evolve. You won’t need to worry about physical space constraints or power density limits. Our systems are built to grow with your data, ensuring your AI initiatives are never throttled by physical limitations. If your enterprise requires a stable, super-fast foundation for AI, get a quote today for a custom consultation. We’ll help you build an infrastructure that stays ahead of the curve.

Scale Your AI Infrastructure for 2026 and Beyond

Enterprise AI demands in 2026 require a strategic shift from generic public clouds to specialized, high-density environments. This case study demonstrates that GPU cloud hosting provides the 24/7 stability and low-latency performance necessary for both massive model training and high-concurrency inference tasks. Our carrier-neutral Miami data center is specifically engineered to handle these extreme power requirements while maintaining operational excellence through dedicated Remote Hands support. You’ll find that managed infrastructure isn’t just about raw speed; it’s about the reliability of mission-critical hardware in a secure, professional facility. Scaling shouldn’t be a gamble for your business. It’s about choosing a partner that understands the technical nuances of high-performance computing and disaster recovery. We’ve built the framework to ensure your AI projects remain fast, stable, and completely future-proof. Your data deserves a high-performance home that can grow as fast as your workloads do. Let’s build a foundation that supports your long-term innovation goals without compromise.

Request a Custom GPU Infrastructure Quote

Frequently Asked Questions

What is the difference between GPU cloud hosting and traditional VPS?

GPU cloud hosting utilizes specialized hardware with thousands of parallel processing cores, whereas a traditional VPS relies on serial CPU execution. Standard virtual servers handle general tasks like web hosting or database management. Our GPU infrastructure provides the raw power needed for matrix calculations, accelerating AI training tasks by 50 to 100 times compared to CPU-only environments.

How does GPU cloud hosting handle the high heat output of NVIDIA H100s?

We manage the 700W thermal design power of H100 units using advanced liquid cooling and high-density rack configurations. Data centers maintain a Power Usage Effectiveness below 1.2 through strict hot/cold aisle containment. These systems move 500 cubic feet of air per minute to prevent thermal throttling. This ensures your hardware maintains peak performance during 24/7 training cycles.

Can I integrate my existing colocation hardware with your GPU cloud?

You can link your physical hardware to our infrastructure using a dedicated 10Gbps or 100Gbps Direct Connect interface. Our hybrid architecture creates a secure bridge between your colocation assets and our scalable GPU clusters. This setup enables sub-2ms latency for data transfers. It’s a practical solution for enterprises that need to scale quickly without replacing their current hardware investments.

What are the network latency guarantees for AI inference workloads?

We guarantee internal network latency stays below 10 microseconds between nodes within a single cluster. We use InfiniBand NDR 400Gbps networking to remove data bottlenecks during complex inference tasks. For external requests, our network routing ensures a round-trip time under 30ms for 95% of global traffic. Low latency is vital for real-time AI applications like live translation or autonomous systems.

How do egress fees work in a managed GPU cloud environment?

Our GPU cloud hosting model includes a generous monthly data transfer quota to keep your costs predictable. We don’t charge for internal data movement between your compute nodes and storage volumes. External data transfers are tracked in real-time through your management dashboard. This transparent approach helps you avoid the billing surprises often found with larger commodity cloud providers.

What level of physical security is provided for AI hardware?

All hardware is stored in Tier III+ facilities that feature 24/7 on-site security and multi-factor biometric access controls. Every rack is secured with individual digital locks and monitored by continuous 4K CCTV feeds. We adhere to ISO 27001 standards to protect your proprietary AI models and data. Only verified technical staff can access the server halls, ensuring your physical infrastructure remains untouched.

Do you offer support for specialized AI frameworks like PyTorch or TensorFlow?

We provide optimized VM images and Docker containers pre-loaded with PyTorch 2.0 and TensorFlow 2.15. These environments include the latest NVIDIA drivers and CUDA 12.x libraries already configured for maximum output. You don’t need to spend hours troubleshooting dependencies. Our super-fast deployment system means your team can start running code within minutes of provisioning.

How quickly can a dedicated GPU cluster be provisioned?

Standard GPU instances are ready in under 120 seconds, while large-scale dedicated clusters are typically provisioned within 24 hours. Our automated orchestration system handles the OS installation and network mapping immediately. For massive H100 deployments, our engineers perform a final validation check to confirm everything meets our performance benchmarks. This rapid turnaround helps you meet tight project deadlines without hardware delays.

SUPPORT

SUPPORT

3EX United States

3EX United States