
Data Center Power and Cooling Costs: The 2026 Enterprise TCO Guide

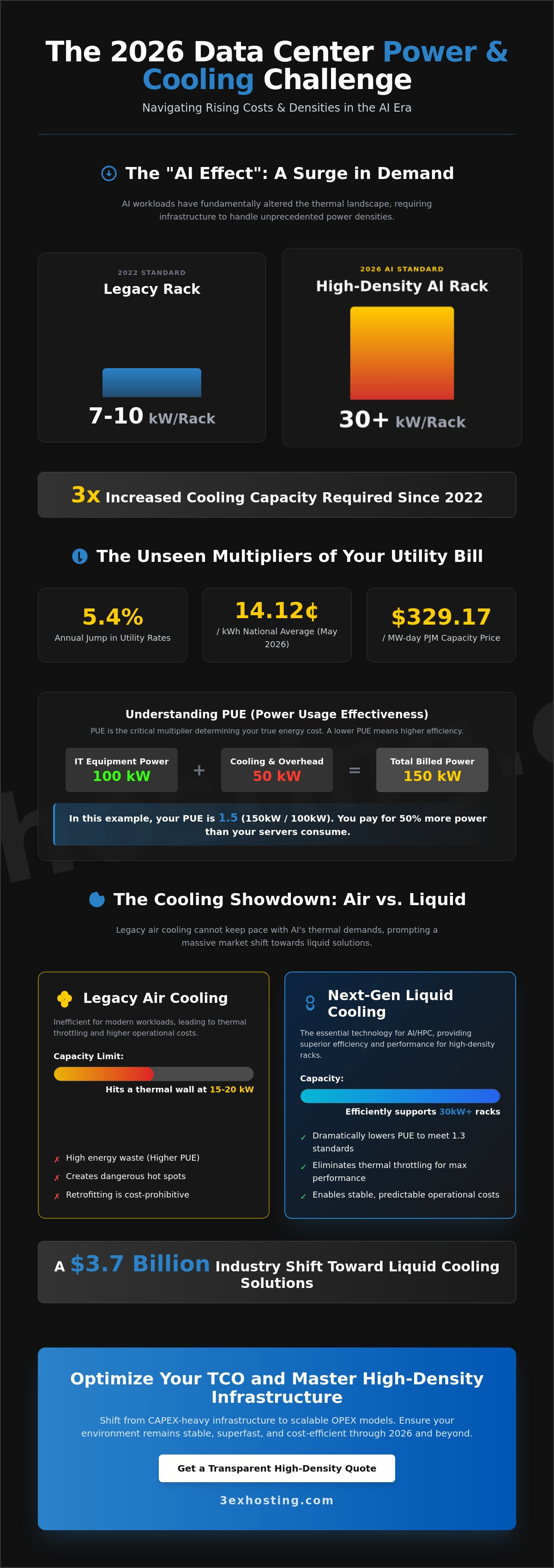

Can your infrastructure survive a 5.4% annual jump in utility rates while rack densities continue to climb? With the national average commercial electricity rate reaching 14.12¢/kWh in May 2026, managing data center power and cooling costs is no longer just an operational task; it’s a survival strategy. You’ve likely noticed that legacy air cooling can’t keep up with modern AI workloads, leading to dangerous thermal throttling and unpredictable bills. It’s frustrating to watch PJM capacity market prices hit $329.17/MW-day while trying to maintain a stable, high-performance environment.

We’ve built this guide to help you master these complexities with a detailed breakdown of power density and next-generation cooling technologies. You’ll gain a clear framework for calculating Total Cost of Ownership (TCO) that accounts for the $3.7 billion shift toward liquid cooling solutions. We’ll compare air versus liquid efficiency and provide actionable steps to lower your PUE to meet the new 1.3 regulatory standards. By the end of this article, you’ll have the technical roadmap needed to ensure your infrastructure remains stable, superfast, and cost-efficient through 2026 and beyond.

Key Takeaways

- Understand how the “AI Effect” tripled cooling requirements since 2022 and adapt your rack density planning for 2026.

- Calculate data center power and cooling costs accurately by mastering the mathematical relationship between PUE and your utility billing.

- Compare traditional air cooling with liquid systems to determine which technology provides the best ROI for your high-density hardware.

- Identify hidden hot spots and power chain inefficiencies through thermal audits and real-time monitoring with Intelligent PDUs.

- Optimize your TCO by shifting from CAPEX-heavy infrastructure to the scalable OPEX models offered by modern colocation environments.

The 2026 Data Center Landscape: Understanding Power and Cooling Dynamics

Managing data center power and cooling costs in 2026 requires looking far beyond the monthly utility bill. Power density, measured in kilowatts (kW) per rack, now dictates the majority of your operational expenditure. As enterprises scale their digital footprints, the cost of supporting high-performance hardware rises faster than the rack count, because denser racks demand more cooling and power infrastructure per square foot. Power Usage Effectiveness (PUE), the ratio of total facility energy to the energy delivered to IT equipment, remains the gold standard for efficiency in 2026. It acts as a multiplier that shows how much of your total energy spend actually powers your servers versus supporting systems.

Many IT managers focus solely on the metered utility rate. This is a strategic mistake. True Total Cost of Ownership (TCO) includes the amortization of cooling equipment, maintenance of redundant power systems, and the efficiency of the underlying data center infrastructure. In 2026, hidden costs like water consumption for cooling and regional carbon offset requirements are becoming standard line items in enterprise budgets. If you aren’t accounting for these, your projections will fail.

The “AI Effect” has fundamentally altered the thermal landscape. Workloads today require three times the cooling capacity compared to 2022 standards. While a standard rack in 2022 might have drawn 7-10 kW, modern AI-driven environments often exceed 30 kW per rack. This density creates intense heat pockets that traditional cooling methods simply can’t dissipate without massive energy waste.

The Shift from Low to High-Density Infrastructure

Legacy facilities often face physical limitations when trying to support 30kW+ racks. Traditional raised-floor air cooling systems hit a thermal wall at approximately 15-20 kW per rack. Beyond this point, the volume of air required for heat removal exceeds the physical space available in the floor plenum. Retrofitting these older spaces is often more expensive than migrating to purpose-built high-density environments. Enterprises staying in legacy sites face higher risks of hardware failure due to thermal stress and unpredictable data center power and cooling costs.
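To see why air volume becomes the constraint, a rough sensible-heat estimate helps. The sketch below is illustrative only: it assumes air near sea level (about 1.2 kg/m³), a specific heat of about 1005 J/(kg·K), and a 12 K temperature rise from inlet to exhaust. None of those values come from this guide, and the function name is hypothetical.

```python
# Rough airflow needed to carry rack heat away with air (sensible heat only).
# All constants below are illustrative assumptions, not measured site values.
AIR_DENSITY_KG_M3 = 1.2            # assumed: air near sea level at about 20 °C
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0  # assumed: dry air
M3S_TO_CFM = 2118.88               # 1 m^3/s expressed in cubic feet per minute


def required_airflow_m3s(rack_kw: float, delta_t_k: float = 12.0) -> float:
    """Volumetric airflow (m^3/s) needed to remove rack_kw of heat.

    Uses Q = rho * V * c_p * dT, solved for the volume flow V.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    watts = rack_kw * 1000.0
    return watts / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


if __name__ == "__main__":
    for kw in (10, 20, 30):
        flow = required_airflow_m3s(kw)
        print(f"{kw} kW rack: {flow:.2f} m^3/s (about {flow * M3S_TO_CFM:.0f} CFM)")
```

Airflow scales linearly with rack power at a fixed temperature rise, so tripling the load triples the air that the plenum and perforated tiles have to deliver.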

Projecting Energy Demands for AI and HPC

Modern GPU clusters in 2026 draw massive amounts of power, with high-end chips peaking at over 2,000 watts each. High-performance computing (HPC) changes the cooling-to-power ratio because the hardware generates heat more densely than generic CPU-based servers. You can explore these specific requirements in our guide to high-density GPU colocation. Efficiently managing these demands requires a shift toward liquid cooling or advanced containment strategies to prevent operational costs from spiraling out of control.

Calculating Power Costs: Consumption, Density, and Utility Rates

Accurately projecting data center power and cooling costs begins with the raw utility rate. As of May 2026, the national average commercial electricity rate has climbed to 14.12¢/kWh, representing a 5.4% year-over-year increase. This baseline rate is only the starting point. To find your true expense, you must apply your facility’s Power Usage Effectiveness (PUE) as a multiplier. If your servers draw 100kW and your PUE is 1.5, you are actually paying for 150kW of power. This efficiency gap directly dictates data center operating costs and can turn a profitable deployment into a financial burden if left unmonitored.
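The PUE multiplier described above can be sketched in a few lines. This is a minimal example, assuming a 30-day month (720 hours) and energy charges only; demand charges, taxes, and pass-through fees are left out, and the function names are hypothetical.

```python
# Energy-only cost estimate: IT load scaled by PUE, then priced per kWh.
HOURS_PER_MONTH = 720  # assumed: 30-day month


def facility_kw(it_load_kw: float, pue: float) -> float:
    """Total facility draw implied by an IT load and a PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_kw * pue


def monthly_energy_cost(
    it_load_kw: float,
    pue: float,
    rate_cents_per_kwh: float = 14.12,  # national average cited in this guide
    hours: float = HOURS_PER_MONTH,
) -> float:
    """Monthly energy cost in dollars, excluding demand charges and fees."""
    kwh = facility_kw(it_load_kw, pue) * hours
    return kwh * rate_cents_per_kwh / 100.0


if __name__ == "__main__":
    total = monthly_energy_cost(100, 1.5)
    it_only = monthly_energy_cost(100, 1.0)
    print(f"Facility draw: {facility_kw(100, 1.5):.0f} kW")
    print(f"Monthly energy cost: ${total:,.2f}")
    print(f"Of which overhead (cooling, losses): ${total - it_only:,.2f}")
```

Running it with your own IT load, PUE, and contracted rate shows how much of the bill is overhead rather than compute.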

Beyond simple consumption, enterprises must account for power factor correction. Inefficient power supplies create reactive power that doesn’t perform work but still strains the electrical system. Many utility providers now charge penalties for low power factors, which can quietly inflate your bill by 2% to 5%. Modern, high-quality hardware typically maintains a high power factor, but legacy equipment often requires active correction to avoid these surcharges. If you are unsure how your current setup scales, you can request a custom quote to see a transparent breakdown of metered power options.
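As a rough illustration of why a low power factor strains the electrical system, the sketch below converts real power into apparent and reactive power. The surcharge function is purely hypothetical: the 0.90 threshold and flat 3% rate are assumptions chosen from within the 2% to 5% range mentioned above, and actual tariffs differ by utility.

```python
import math


def apparent_power_kva(real_kw: float, power_factor: float) -> float:
    """Apparent power (kVA) the electrical system must carry for a given real load."""
    if not 0 < power_factor <= 1:
        raise ValueError("power_factor must be in (0, 1]")
    return real_kw / power_factor


def reactive_power_kvar(real_kw: float, power_factor: float) -> float:
    """Reactive power (kVAR): carried by the system but performing no useful work."""
    kva = apparent_power_kva(real_kw, power_factor)
    return math.sqrt(max(kva ** 2 - real_kw ** 2, 0.0))


def bill_with_pf_surcharge(
    base_bill: float,
    power_factor: float,
    threshold: float = 0.90,       # assumed tariff threshold (hypothetical)
    surcharge_rate: float = 0.03,  # assumed flat surcharge (hypothetical)
) -> float:
    """Apply a flat surcharge when the power factor falls below the threshold."""
    if power_factor < threshold:
        return base_bill * (1 + surcharge_rate)
    return base_bill


if __name__ == "__main__":
    print(f"{apparent_power_kva(100, 0.8):.1f} kVA apparent")
    print(f"{reactive_power_kvar(100, 0.8):.1f} kVAR reactive")
    print(f"${bill_with_pf_surcharge(10_000, 0.85):,.2f} with surcharge")
```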

Metered Power vs. Flat-Rate Racks

Metered power is the most transparent billing model for variable enterprise workloads. You pay for the exact kilowatt-hours consumed, which incentivizes energy-saving configurations. Conversely, “all-inclusive” or flat-rate models often hide facility inefficiencies. While they offer a predictable monthly bill, you’re frequently paying for a capacity buffer you never actually use. For businesses with steady growth, full cabinet colocation with metered billing provides the most granular control over scaling expenses without overpaying for idle resources.

The Financial Impact of Power Redundancy

Redundancy is essential for stability, but it comes with a price tag. A 2N infrastructure provides the highest level of security by doubling every component, yet it essentially requires you to pay for twice the capacity while half remains idle. With PJM capacity market prices reaching $329.17/MW-day for the 2026/2027 period, the cost of holding that “just in case” capacity is significant. Most modern enterprises find that N+1 redundancy offers the ideal balance. It provides the necessary safety net for maintenance and hardware failure without the extreme overhead of a fully mirrored 2N system.
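The difference between the two schemes is easy to quantify. The sketch below counts power modules under N, N+1, and 2N and annualizes the capacity price quoted above. The 400 kW load and 100 kW module size are hypothetical, and how capacity charges are actually allocated and passed through depends on your utility and contract.

```python
import math


def modules_required(load_kw: float, module_kw: float, scheme: str) -> int:
    """Number of power modules (for example UPS units) for a redundancy scheme."""
    n = math.ceil(load_kw / module_kw)
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1
    if scheme == "2N":
        return 2 * n
    raise ValueError(f"unknown scheme: {scheme}")


def idle_fraction(load_kw: float, module_kw: float, scheme: str) -> float:
    """Share of installed capacity that sits unused at the given load."""
    installed_kw = modules_required(load_kw, module_kw, scheme) * module_kw
    return 1.0 - load_kw / installed_kw


def annual_capacity_charge(
    peak_mw: float, price_per_mw_day: float = 329.17, days: int = 365
) -> float:
    """Annualized capacity-market cost in dollars for a given peak obligation."""
    return peak_mw * price_per_mw_day * days


if __name__ == "__main__":
    for scheme in ("N+1", "2N"):
        count = modules_required(400, 100, scheme)
        idle = idle_fraction(400, 100, scheme)
        print(f"{scheme}: {count} modules, {idle:.0%} idle")
    print(f"Capacity cost per MW-year: ${annual_capacity_charge(1):,.2f}")
```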

The Escalating Cost of Cooling: Air vs. Liquid Infrastructure

Cooling is the primary variable that separates a cost-efficient facility from a financial drain. Traditional Computer Room Air Conditioning (CRAC) and Computer Room Air Handler (CRAH) systems are hitting their physical limits in 2026. These systems rely on moving massive volumes of chilled air through floor plenums, a method that becomes cost-prohibitive as rack densities exceed 15 kW. While air-cooled facilities typically maintain a PUE of 1.5 or higher, modern liquid-cooled environments often achieve a PUE of less than 1.2, directly slashing data center power and cooling costs by reducing the energy overhead required for thermal management.

The capital investment required for these technologies varies significantly. Direct-to-chip cooling systems currently carry a CAPEX premium of approximately $2,500 to $4,500 per kW compared to traditional air cooling. However, for enterprises running high-density hardware, this upfront cost is often offset by the reduction in annual operational expenses. Choosing the right cooling architecture is a balance between your current hardware requirements and your three-year growth projections.
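One way to frame that balance is a simple payback estimate on energy alone. The sketch below is a rough illustration that assumes continuous operation (8,760 hours per year), the PUE values and utility rate quoted in this guide as defaults, and no discounting. The function names are hypothetical.

```python
HOURS_PER_YEAR = 8760  # assumed: continuous operation


def annual_savings_per_it_kw(
    pue_air: float = 1.5,
    pue_liquid: float = 1.2,
    rate_cents_per_kwh: float = 14.12,
) -> float:
    """Yearly energy savings in dollars per kW of IT load from a lower PUE."""
    kwh_saved = (pue_air - pue_liquid) * HOURS_PER_YEAR
    return kwh_saved * rate_cents_per_kwh / 100.0


def simple_payback_years(capex_premium_per_kw: float, **pue_and_rate) -> float:
    """Years for energy savings alone to repay the CAPEX premium (undiscounted)."""
    savings = annual_savings_per_it_kw(**pue_and_rate)
    if savings <= 0:
        raise ValueError("liquid PUE must be lower than air PUE to pay back")
    return capex_premium_per_kw / savings


if __name__ == "__main__":
    for premium in (2500, 4500):
        years = simple_payback_years(premium)
        print(f"${premium}/kW premium: {years:.1f} years on energy savings alone")
```

This counts only the energy overhead. It leaves out maintenance, water, floor space, and the value of avoiding thermal throttling, so treat the result as a conservative view of the benefit and rerun it with your own rate and PUE figures.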

Aisle Containment: Maximizing Existing Air Systems

Aisle containment remains the most cost-effective way to optimize air-cooled environments. By physically separating hot exhaust from cold intake air, you eliminate “bypass air” and “re-circulation,” two of the biggest drivers of wasted energy. Cold aisle containment is often easier to retrofit in legacy spaces. Hot aisle containment, while sometimes more complex to install, typically offers a higher ROI by allowing for higher return air temperatures to the cooling units. This optimization reduces fan energy consumption and allows your chillers to operate at higher, more efficient set points, lowering your overall utility spend.

Liquid Cooling: When the Investment Becomes Necessary

Air cooling of any kind stops being viable at around the 35kW per rack threshold. At this density, the heat generated by modern processors is so concentrated that air cannot carry it away fast enough to prevent thermal throttling. For high-performance workloads, integrating a GPU dedicated server requires a cooling strategy that can handle extreme thermal loads without compromising stability. Liquid cooling, whether through direct-to-chip cold plates or single-phase immersion, ensures your hardware maintains peak clock speeds. It’s a technical necessity for AI factories, where data center power and cooling costs per kW matter more than simple square footage metrics.

Operational Audits: Identifying Inefficiencies in Your Power Chain

Thermal audits reveal why your utility bill stays high despite low server utilization. Identifying hot spots is critical, especially since the ASHRAE Fifth Edition thermal guidelines recommend a tighter 18°C to 22°C inlet range for high-density Class H1 systems. If your inlet temperatures drift above that range, your hardware is more likely to throttle, which hurts performance and increases data center power and cooling costs. Use infrared thermography to map your facility. This process exposes airflow short-circuits that simple software monitoring might miss.
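Between thermography passes, a simple check of logged inlet temperatures can flag racks that fall outside the 18°C to 22°C band. The sketch below is a minimal example; the rack names and readings are hypothetical, and in practice the data would come from your own sensors or DCIM export.

```python
# Flag rack inlet temperatures outside a target band.
TARGET_MIN_C = 18.0  # lower bound of the range cited in this guide
TARGET_MAX_C = 22.0  # upper bound of the range cited in this guide


def out_of_range_inlets(
    readings: dict[str, float],
    low: float = TARGET_MIN_C,
    high: float = TARGET_MAX_C,
) -> dict[str, float]:
    """Return the racks whose inlet temperature falls outside [low, high]."""
    return {rack: temp for rack, temp in readings.items() if not low <= temp <= high}


if __name__ == "__main__":
    # Hypothetical sample readings in °C, keyed by rack label.
    sample = {"A1": 20.0, "A2": 24.5, "B1": 17.0, "B2": 22.0}
    for rack, temp in out_of_range_inlets(sample).items():
        print(f"{rack}: {temp:.1f} °C is outside {TARGET_MIN_C}-{TARGET_MAX_C} °C")
```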

Decommissioning “zombie servers” remains the fastest way to reclaim power capacity. Industry reports from early 2026 indicate that up to 25% of physical servers in enterprise environments draw power without performing active compute tasks. These idle units contribute nothing to ROI while steadily inflating your monthly spend. Pair this cleanup with the installation of Intelligent PDUs. These units provide real-time, rack-level consumption data, allowing you to identify specific hardware that violates your efficiency targets.
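Once rack-level data is available, a first-pass zombie screen can be as simple as pairing average power draw with average CPU utilization. The sketch below is illustrative only: the `ServerSample` fields, the 5% CPU threshold, and the 50 W floor are all assumptions, not values from any particular PDU or DCIM product.

```python
from dataclasses import dataclass


@dataclass
class ServerSample:
    """Hypothetical per-server averages over an observation window."""
    name: str
    avg_watts: float    # from rack-level PDU outlet metering
    avg_cpu_pct: float  # from host monitoring


def find_zombie_candidates(
    samples: list[ServerSample],
    cpu_threshold_pct: float = 5.0,  # assumed cutoff for "no active compute"
    min_watts: float = 50.0,         # assumed floor to skip powered-off outlets
) -> list[ServerSample]:
    """Servers that draw meaningful power while doing almost no work."""
    return [
        s for s in samples
        if s.avg_cpu_pct < cpu_threshold_pct and s.avg_watts >= min_watts
    ]


def reclaimable_kw(candidates: list[ServerSample]) -> float:
    """Total power (kW) that decommissioning the candidates would free up."""
    return sum(s.avg_watts for s in candidates) / 1000.0


if __name__ == "__main__":
    fleet = [
        ServerSample("web-01", 310.0, 42.0),
        ServerSample("legacy-db", 280.0, 1.5),
        ServerSample("test-07", 190.0, 0.8),
        ServerSample("spare-outlet", 0.0, 0.0),
    ]
    zombies = find_zombie_candidates(fleet)
    print([s.name for s in zombies], f"{reclaimable_kw(zombies):.2f} kW")
```

Low CPU alone does not prove a server is unused, so treat the output as a list of candidates to confirm with application owners before decommissioning.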

Physical hygiene in the cabinet is just as important as the hardware inside. Unmanaged cables block exhaust paths, forcing fans to spin faster and consume more energy. Installing blanking panels in every empty “U” space prevents hot exhaust air from recirculating into the cold aisle. These simple, low-cost adjustments can improve cooling efficiency by 10% to 15% without requiring expensive equipment upgrades.

Real-Time Monitoring and DCIM Tools

Modern Data Center Infrastructure Management (DCIM) tools now use predictive analytics to forecast power surges. This is vital for avoiding peak-demand penalties, which have become more aggressive in 2026. By integrating your DCIM with an automated Building Management System (BMS), you can adjust cooling outputs in real-time based on actual compute load rather than static schedules. This ensures your cooling scales exactly with your needs.

The “Remote Hands” Advantage in Efficiency

Software alone cannot fix a misaligned floor tile or a loose containment curtain. Professional remote hands support provides the human oversight needed to maintain a high-efficiency environment. Outsourcing physical infrastructure auditing is often more cost-effective than keeping specialized in-house staff. These experts standardize cabinet layouts to ensure maximum thermal performance, keeping your systems stable and your overhead predictable. If you’re ready to optimize your environment, contact us for a professional audit quote to see how we can streamline your operations.

Optimizing TCO Through High-Density Colocation Solutions

Building and maintaining a private on-premise facility in 2026 is a massive financial risk for most enterprises. The average global cost to build a data center has reached $11.30 million per MW, a figure that includes the specialized cooling and power distribution systems required for modern hardware. Shifting from a CAPEX-heavy model to the OPEX-driven structure of colocation allows you to allocate capital toward core business growth rather than depreciating infrastructure. By leveraging a high-density provider, you gain immediate access to institutional-grade cooling technologies without the multi-million dollar upfront investment.

The “Carrier Hotel” model offers a distinct advantage through economies of scale. Large-scale colocation providers negotiate power rates at a volume that individual enterprises simply can’t match. This scale also allows for more efficient management of data center power and cooling costs, as the facility can distribute the energy overhead of massive cooling plants across thousands of racks. 3EX Hosting delivers this enterprise-grade efficiency by providing the technical stability and superfast connectivity your mission-critical loads require, ensuring your TCO remains predictable even as utility rates fluctuate.

Customization is another key factor in optimizing your total spend. Whether you require custom cages or private suites, you can tailor the power and cooling delivery to your specific security and density needs. This modular approach prevents you from paying for cooling capacity you don’t use, while still providing the headroom necessary for sudden compute spikes. It’s a balanced strategy that prioritizes reliability and cost control simultaneously.

The Economics of Full Cabinet Colocation

Choosing full cabinet colocation provides a level of cost predictability that on-premise management can’t match. You avoid the volatility of managing your own chillers and UPS systems, shifting those maintenance risks to the provider. You also gain direct access to high-performance cross-connects, eliminating the need for expensive additional networking infrastructure. For businesses planning for scalable enterprise growth, our data center cage solutions offer the physical security and power density needed to support 2026 workloads efficiently.

Securing Your Infrastructure for the AI Era

Your infrastructure must be ready for the hardware of tomorrow. As GPU clusters become standard, your provider needs the power headroom to support 30kW+ racks without compromising the cooling of adjacent equipment. Redundancy shouldn’t just be a checkbox; it’s a business continuity pillar that protects you from thermal failure. 3EX Hosting ensures your systems stay stable through the most demanding cycles. When you’re ready to transition to a more efficient model, get a custom quote for high-density colocation to see how we can lower your operational overhead.

Securing Your Infrastructure Efficiency for the 2026 Compute Era

The path to stabilizing your data center power and cooling costs depends on your ability to adapt to high-density requirements. With commercial electricity rates sitting at 14.12¢/kWh as of May 2026, every 0.1 reduction in PUE directly lowers your bill. You’ve seen how transitioning to liquid cooling or optimizing air containment prevents thermal throttling in 30kW+ environments. Maintaining this level of performance requires constant vigilance through real-time monitoring and professional oversight.

Don’t let legacy infrastructure or inefficient cooling cycles drain your technical budget. We provide the stability your business needs to scale safely and predictably. Our carrier-neutral facility offers dedicated high-density GPU support and enterprise-grade N+1 power redundancy to ensure your mission-critical loads never falter. With 24/7 Remote Hands on-site, we manage the physical complexities and thermal audits so you can focus on growth.

Optimize your infrastructure costs with a custom quote from 3EX Hosting and secure a stable, high-performance foundation for your enterprise. Your digital assets deserve a modern environment that combines technical excellence with absolute reliability.

Frequently Asked Questions

What is the average cost of power for a data center rack in 2026?

Average costs depend on the rack’s power draw and the local utility rate, which reached 14.12¢/kWh in May 2026 after a 5.4% year-over-year increase. A standard 10kW rack operating at a PUE of 1.5 draws 15kW at the meter, or roughly 10,800 kWh over a 30-day month. At the national average rate, that works out to about $1,525 per month in energy charges alone. Regional factors like the PJM capacity market price of $329.17/MW-day also influence the final monthly bill for enterprise users.

How much does it cost to cool a high-density AI server rack?

Cooling a high-density AI rack often requires liquid-based infrastructure due to the 35kW thermal threshold where air systems fail. While the capital expenditure for direct-to-chip systems is $2,500 to $4,500 per kW higher than air, the operational data center power and cooling costs are lower due to higher efficiency. AI factories specifically built for these loads use specialized cooling to prevent thermal throttling on high-end GPU clusters.

Is liquid cooling more expensive to operate than air cooling?

Liquid cooling typically has lower operational costs despite its higher initial investment. Single-phase immersion cooling offers a 20% lower 10-year Total Cost of Ownership compared to traditional air cooling with rear-door heat exchangers. Because liquid systems achieve PUE ratings below 1.2, they consume significantly less energy to dissipate the same amount of heat. This makes them the superior financial choice for high-density deployments over 30kW per rack.

What is a good PUE rating for a modern data center?

A PUE rating of 1.3 or lower is considered the benchmark for efficiency in 2026. New regulations, such as the German Energy Efficiency Act amendment effective July 1, 2026, explicitly mandate this limit for new facilities. While existing data centers have until 2027 to reach 1.6, top-tier providers already target sub-1.2 ratings using liquid cooling. Lower PUE directly translates to lower overhead on your monthly utility expenses.

How can I reduce my data center cooling costs without new hardware?

You can lower cooling expenses by improving airflow management and decommissioning idle “zombie” servers. Installing blanking panels and standardizing cable layouts can improve cooling efficiency by 10% to 15% without hardware upgrades. Professional thermal audits using infrared thermography help identify hot spots where chilled air is wasted. These operational adjustments ensure that your existing cooling plant doesn’t work harder than necessary to maintain safe inlet temperatures.

What happens to cooling costs if I switch to 3-phase power?

Switching to 3-phase power has little direct effect on cooling costs, but it reduces electrical line losses and improves the efficiency of your power distribution. It allows for higher power density in a smaller footprint, which is essential for supporting modern AI hardware. While it doesn’t cool the servers directly, it provides a more stable electrical foundation that supports high-efficiency power supplies. Paired with well-balanced loads, this can make it easier to hold a good power factor and avoid utility penalties that inflate your operational spend.

Can high-density colocation help lower my enterprise carbon footprint?

High-density colocation significantly reduces your carbon footprint by centralizing workloads in facilities with ultra-low PUE ratings. Professional providers use large-scale industrial cooling plants that are much more efficient than small, on-premise server rooms. By sharing these optimized resources, your enterprise benefits from a lower energy-per-compute ratio. Many modern facilities also integrate renewable energy sources and carbon offset programs to meet 2026 regulatory sustainability standards.

Are there hidden costs in metered power colocation agreements?

Hidden costs often include reactive power penalties and capacity market surcharges. If your hardware has a low power factor, utilities may apply surcharges for the strain placed on the grid. Additionally, the PJM capacity market price reached $329.17/MW-day for the 2026/2027 delivery year, a cost often passed through to customers. Always review your agreement for “pass-through” clauses that might expose you to regional utility price fluctuations or infrastructure maintenance fees.

SUPPORT

3EX United States
