Blog

The Future of GPU Server Hosting: High-Density Trends and Infrastructure in 2026

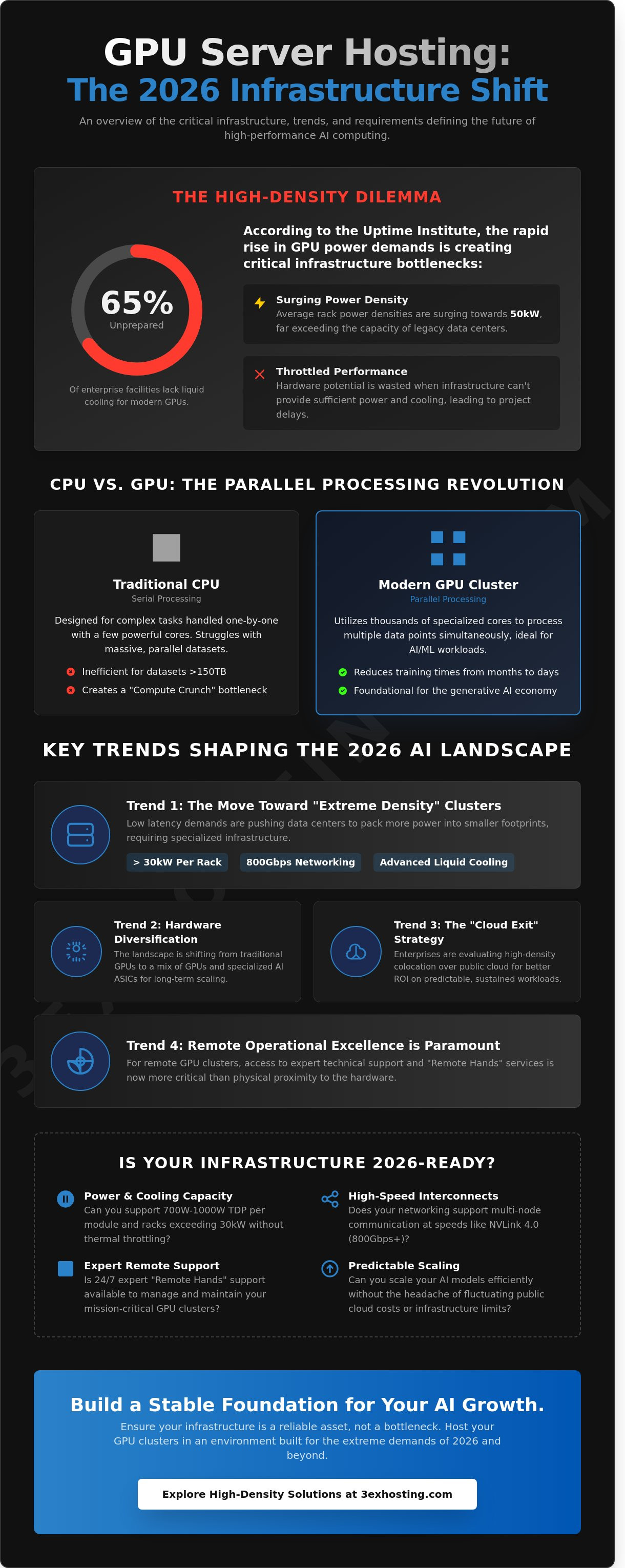

According to the Uptime Institute’s 2024 Global Data Center Survey, average rack power densities are now surging toward 50kW, yet 65% of existing enterprise facilities aren’t equipped with the liquid cooling required for modern GPU server hosting. You’ve likely seen how scaling AI projects quickly turns into a battle against physical infrastructure limits and unpredictable monthly bills. It’s frustrating when your hardware’s potential is throttled by a data center that can’t provide enough power or by public cloud costs that fluctuate without warning.

You’ll discover the critical infrastructure shifts and technological trends defining high-performance computing as we head into 2026. We’ll provide a clear framework to help you choose between colocation and cloud models while detailing the superfast networking and cooling requirements necessary for scaling. This guide outlines exactly how to build a stable foundation that keeps your AI models running efficiently without the typical headaches of remote hardware management. Our goal is to ensure your infrastructure remains a reliable asset rather than a bottleneck for growth.

Key Takeaways

- Understand how GPU server hosting is evolving from a specialized niche into the foundational engine for enterprise AI and high-performance parallel processing.

- Learn why modern AI workloads demand “Extreme Density” infrastructure and how to identify data centers equipped to manage power consumption exceeding 30kW per rack.

- Discover the shift toward specialized AI ASICs alongside traditional GPUs and how this hardware diversification impacts your long-term scaling strategy.

- Evaluate the “Cloud Exit” trend to determine whether a managed cloud model or a high-density colocation strategy offers better ROI for your predictable workloads.

- Identify the critical operational requirements for remote GPU clusters, where expert technical support and “Remote Hands” outweigh the need for physical proximity.

The Evolution of GPU Server Hosting in 2026

GPU server hosting provides high-performance computing resources specialized for massive parallel processing. By 2026, these systems have transitioned from niche graphics accelerators into the backbone of enterprise IT infrastructure. We’ve seen a shift from general-purpose hardware to specialized clusters designed specifically for AI. These 2026 standards focus on massive data throughput and HBM3e memory bandwidth to keep up with model complexity. Modern enterprises don’t just use GPUs for rendering; they use them to power every facet of their predictive and generative operations.

The transition toward AI-optimized hardware clusters has been driven by the need for efficiency. Standard hosting environments often struggle with the thermal and power demands of 2026-era chips. Professional GPU server hosting now requires specialized power delivery and cooling systems that can handle 700W to 1000W TDP per module. This evolution ensures that businesses can scale their operations without hitting the hardware bottlenecks that limited previous generations of data centers.

Why Parallel Processing Defines Modern Enterprise IT

Traditional CPUs are designed for serial processing, handling complex tasks one by one with a handful of powerful cores. This architecture fails when faced with the “Compute Crunch” of 2026, where datasets often exceed 150 terabytes. A modern GPU cluster utilizes thousands of smaller, specialized cores to process multiple data points simultaneously. This parallel approach reduces training times from months to days. GPU hosting serves as the foundational layer for the generative AI economy. It’s the only way to maintain the speed required for real-time data synthesis and complex simulation.

The Surge in AI and Machine Learning Demand

Large Language Models (LLMs) and generative video tools have fundamentally altered hosting requirements. In 2026, the focus has shifted from simple inference to continuous model fine-tuning. This requires sustained, high-load compute cycles that would burn out standard server configurations. Many organizations now rely on AI cloud computing hosting to manage these scalable projects efficiently. GPU server hosting must now support interconnect speeds like NVLink 4.0, which facilitates lightning-fast communication between nodes. This level of connectivity is vital for training models with trillions of parameters. Without this specialized infrastructure, companies face significant latency and increased operational costs during the fine-tuning phase.

The move toward these high-density environments creates a stable platform for innovation. Businesses can deploy complex neural networks with the confidence that their underlying hardware is built for the task. It’s about more than just raw power; it’s about the reliability and thermal management required to keep these systems running at peak performance 24/7.

Key Trends Shaping the 2026 AI Infrastructure Landscape

The AI infrastructure sector is undergoing a massive transformation as we approach 2026. Data centers no longer just house servers; they manage heat and power at unprecedented levels. According to the Deloitte Tech Trends 2026 report, the shift toward purpose-built AI environments is accelerating. This evolution forces a rethink of traditional GPU server hosting models. We’re seeing a transition from general-purpose racks to specialized environments capable of supporting the massive thermal output of modern chips. Efficiency is the new benchmark for success.

The Move Toward High-Density GPU Clusters

Enterprises now prioritize low latency over almost everything else. This demand drives the “Extreme Density” trend. By 2026, standard racks exceeding 30kW will be common. Packing more power into smaller footprints reduces the physical distance between nodes. This is critical for the 800Gbps networking required by Blackwell and Blackwell-Ultra architectures. Managing these intense thermal loads requires specialized high-density colocation solutions that provide advanced liquid cooling loops and reinforced floor loading capacities. It’s about maintaining thermal headroom in a crowded space.

Specialized Hardware for Specialized Tasks

The hardware landscape is diversifying rapidly. While NVIDIA remains dominant, there’s a surge in specialized AI ASICs designed for specific workloads. Training-focused servers still rely on massive H100 or B200 clusters. However, inference-optimized edge nodes are moving closer to the end user. These systems leverage HBM3e memory to achieve the 4.8 TB/s bandwidth necessary for real-time LLM responses. Many large-scale enterprises are now deploying custom silicon within private data center suites to maintain total control over their proprietary models. This shift ensures that hardware is tuned exactly to the software’s requirements.

Sustainability mandates aren’t optional anymore. New regulations in 2025 and 2026 require data centers to hit strict Power Usage Effectiveness (PUE) targets. This pressure drives the decentralization of GPU power. Massive hubs in traditional markets are being supplemented by regional enterprise clusters. These smaller, distributed sites often have better access to green energy grids. They provide the stability and speed required for localized GPU server hosting without the massive carbon footprint of a single mega-facility. Smart operators are already optimizing for this distributed future to ensure long-term reliability. If you’re planning a deployment, you can request a technical consultation to see how these trends affect your specific hardware requirements.

- Extreme Density: Racks are moving from 15kW to over 30kW to support Blackwell chips.

- Custom Silicon: Growth in proprietary ASICs for inference reduces reliance on general GPUs.

- Regional Shifts: Moving compute closer to data sources to lower latency and energy costs.

- Green Mandates: 2026 regulations will penalize facilities that don’t meet strict efficiency standards.

The Critical Role of High-Density Data Center Infrastructure

Standard data centers are failing the AI test. Most facilities built before 2020 were designed for 4kW to 6kW per cabinet, yet a single modern H100 or B200 cluster can pull 40kW alone. If you try to run high-performance GPU server hosting in a legacy environment, you’ll face thermal throttling or immediate power trips. It’s a physical limitation of the infrastructure. Most standard facilities simply weren’t built to move that much heat or deliver that much current to a single floor tile.

Power Density: The New Bottleneck in GPU Hosting

Delivering 20kW to 50kW to a single footprint requires specialized electrical engineering. Traditional flat-rate power models don’t work for these deployments because the variance in load during training cycles is too high. Metered power is the industry standard for these setups. You pay only for what the chips actually pull. The 3EX Hosting data center infrastructure is built specifically for these high-density loads, ensuring your hardware gets steady current without local circuit overloads. This stability is essential for maintaining 100% uptime during intensive compute cycles.

AI training runs can last weeks or months. A single power failure results in massive data loss and wasted compute hours. Mission-critical clusters require N+1 or 2N redundancy at every level. This setup uses two independent power feeds from separate utility grids. Beyond power, physical security is vital. High-value GPU assets often cost over $300,000 per rack. They require dedicated cages, 24/7 surveillance, and biometric access controls to prevent unauthorized physical tampering.

Cooling Innovations: Beyond Standard Airflow

Air cooling is reaching its physical limit for AI workloads. As noted in the 2026 Global Data Center Outlook, the shift toward liquid-to-chip cooling and rear-door heat exchangers is accelerating. Hot aisle and cold aisle containment is now the absolute baseline for GPU server hosting. Without precise airflow management, heat pockets form and degrade silicon over time. Proper cooling efficiency doesn’t just prevent crashes; it extends the lifespan of your GPUs and ensures consistent clock speeds during heavy workloads.

- Rear-door heat exchangers: These remove heat at the source before it enters the room.

- Liquid-to-chip: This method uses coolant plates directly on the GPU for maximum thermal transfer.

- Containment: Physical barriers prevent the mixing of cold intake air and hot exhaust air.

Choosing the right infrastructure is a matter of protecting your investment. High-performance AI clusters demand a level of power and cooling that 90% of current data centers cannot provide. You need a partner that understands the specific requirements of high-density hardware. Our detailed guide on high density GPU colocation for enterprise AI infrastructure provides a technical roadmap for evaluating power specs and liquid cooling architectures to build a reliable foundation for your workloads.

GPU Colocation vs. Managed Cloud: A Strategic Decision Framework

Deciding between public cloud and colocation isn’t just about immediate costs. It’s about how you manage scale and technical debt. Many enterprises are shifting away from the “cloud-first” mantra in a movement known as the Cloud Exit. This trend is particularly visible among firms with predictable, high-volume workloads. For high-density GPU server hosting, the choice between Opex and Capex models defines your long-term ROI and operational agility.

Public cloud providers offer speed, but they charge a significant premium for that convenience. When your GPU clusters run 24/7 for model training, the hourly costs of cloud instances can become astronomical. Colocation allows you to own the asset, giving you better control over the hardware lifecycle and the specific cooling requirements of your chips. Understanding how leading enterprises have navigated these tradeoffs is invaluable; a detailed enterprise case study on GPU cloud hosting and scaling AI infrastructure illustrates how organizations achieve zero-throttle performance and predictable costs for demanding workloads.

Calculating Total Cost of Ownership (TCO)

Cloud instances often seem cheaper on day one because there’s no upfront hardware cost. However, the breakeven point for 24/7 GPU utilization typically occurs within 12 to 14 months. If you plan to run a cluster for a three-year depreciation cycle, colocation is almost always the more economical choice. You must also factor in hidden costs. Public clouds frequently charge high data egress fees when you move large datasets. In a professional data center, you replace these volatile expenses with predictable cross-connect fees and fixed rack rates. For a comprehensive breakdown of how cloud, dedicated, and colocation models compare on performance and total cost, our AI GPU hosting comparison of cloud vs. dedicated vs. colocation in 2026 provides the detailed analysis you need to make an informed decision.

Performance, Latency, and Bare Metal Advantage

Virtualization adds a layer of overhead that can hinder AI performance. In multi-tenant cloud environments, “noisy neighbors” often compete for the same underlying resources. This contention can degrade training times by 12% to 18% compared to bare metal setups. Bare metal hardware ensures you have 100% of the processing power you paid for, which is critical for superfast computation.

High-speed interconnects like InfiniBand or NVLink require specific physical proximity and specialized cabling. You gain the freedom to optimize these layouts when you use cage solutions. This physical control is vital for reducing latency between nodes. For the highest level of enterprise security and customization, private data center suites provide a dedicated environment where you can implement bespoke cooling and security protocols. This setup removes the risks associated with shared infrastructure and gives your team total sovereignty over the stack.

Build your AI future on a stable, high-performance foundation. Get a custom quote for your GPU cluster today.

Implementation and Operational Excellence for GPU Clusters

Physical distance from your hardware shouldn’t limit your AI capabilities. When a data center provides professional support, your team stays focused on model architecture while the facility manages the physical layer. Shipping a single rack of modern AI hardware can represent an investment of $2.5 million to $4 million. These units often weigh over 2,000 pounds and require white-glove handling at the loading dock. 3EX Hosting acts as your primary operational partner for these national enterprise deployments, managing the logistics of receipt, unboxing, and initial power-up without requiring your staff to be on-site.

Efficient GPU server hosting relies on a facility’s ability to handle extreme power densities. Industry data shows rack requirements have jumped from 10kW to over 60kW in just 24 months. Managing this transition requires a partner who understands the technical nuances of high-performance computing (HPC) environments. By offloading the physical management, you eliminate the need for local engineering staff and reduce operational overhead while maintaining total control over your stack.

The Necessity of 24/7 Remote Hands Support

Complex clusters require more than just power and cooling. They need immediate human intervention when hardware sensors trigger alerts. Our remote hands teams specialize in the delicate tasks required for AI infrastructure. This includes managing high-speed InfiniBand cabling where a single improper bend can cause packet loss and latency spikes. Technicians provide “eyes on glass” support to verify boot sequences or swap failed power modules within minutes. Following the best practices in our Remote Hands Support guide ensures that every interaction with your hardware is documented and performed to enterprise standards. This proactive approach prevents small component failures from cascading into cluster-wide outages that could cost thousands in lost compute time.

Security and Compliance for AI Data Assets

AI training sets often contain a company’s most sensitive proprietary data. Protecting this intellectual property is just as important as cooling the chips. We maintain strict adherence to SOC2, HIPAA, and PCI compliance frameworks to ensure your data remains secure at the physical level. This includes biometric access controls, constant video surveillance, and caged environments that prevent unauthorized physical contact with your nodes. Security also extends to power stability. A single power fluctuation can ruin a training job that has been running for 500 hours, resulting in significant setbacks. If you’re ready to deploy, get a custom quote to see how our high-density infrastructure supports your specific GPU requirements. 3EX Hosting delivers the stable, superfast environment your enterprise needs to lead in the AI space.

Securing Your Lead in the 2026 AI Landscape

The technical demands of 2026 require more than just standard hardware. Modern AI workloads now necessitate high-density power configurations exceeding 20kW per rack to manage the 1,000-watt TDP of next-generation silicon. Scaling your GPU server hosting within a carrier-neutral facility ensures you have the high-speed cross-connects needed for ultra-low latency. You shouldn’t compromise on infrastructure that can’t handle these specific thermal and electrical loads. Reliable operations also depend on 24/7 on-site Remote Hands expert support to maintain cluster stability during intense training cycles. 3EX Hosting provides this specialized technical foundation, combining speed with professional reliability. By moving your clusters into a high density GPU colocation environment today, you’ll guarantee the performance and security your business requires for long-term growth. We’re ready to help you build a stable, high-performance future.

Request a Custom GPU Infrastructure Quote

Frequently Asked Questions

What is the difference between a standard server and a GPU server?

Standard servers rely on Central Processing Units for serial tasks, while GPU servers utilize thousands of cores for massive parallel processing. An NVIDIA H100 GPU contains 80 billion transistors, making it roughly 20 times faster than a standard CPU for complex matrix operations. This specialized architecture allows your infrastructure to handle intensive AI workloads that would crash a traditional server within seconds.

How much power does a typical GPU server rack require in 2026?

A typical high-density rack will require between 100kW and 150kW of power by 2026. This represents a 400% increase compared to the 25kW limits found in standard data center facilities just three years ago. You’ll need specialized power distribution units and heavy-duty electrical feeds to manage these super-fast energy requirements safely without risking a total system shutdown or hardware damage.

Is it better to rent GPU cloud instances or colocate my own hardware?

Renting cloud instances is ideal for projects lasting under 6 months, but colocation saves money for longer commitments. Colocation typically reduces total cost of ownership by 45% for steady-state AI training and large-scale deployments. If your hardware runs at 80% utilization or higher, owning the gear and placing it in a professional facility is the most stable financial choice for your business.

What cooling methods are best for high-density GPU hosting?

Direct-to-chip liquid cooling is the most effective method for managing heat in high-density setups. It removes approximately 90% of heat directly from the processors, which is essential for modern GPU server hosting. Traditional air cooling systems usually fail once a rack exceeds 30kW, leading to performance drops and permanent hardware failure. Liquid cooling ensures your components stay at optimal temperatures during peak loads.

Can I use remote hands to manage my GPU hardware if I am not on-site?

You can use 24/7 remote hands services to manage your hardware without being physically present at the facility. Certified technicians perform physical tasks like swapping NVMe drives, re-cabling ports, or performing hardware resets within a 20-minute response window. It’s a reliable way to maintain high uptime for your clusters while you focus on your core AI development from any location worldwide.

What are the main security risks associated with GPU server hosting?

Data exfiltration and side-channel attacks are the most significant threats to shared hardware environments. Industry research shows that 85% of AI-focused cyberattacks target the training data or the model weights themselves. Secure GPU server hosting mitigates these risks through physical isolation, strict access logs, and hardware-based encryption like Trusted Execution Environments to keep your intellectual property safe from unauthorized access.

How does GPU hosting impact AI training times compared to CPUs?

GPUs accelerate AI training by up to 100 times compared to standard CPU configurations because they handle thousands of simultaneous threads. A complex task that takes 30 days on a high-end CPU cluster often finishes in less than 8 hours on a modern GPU-accelerated cluster. This massive speed advantage is vital for staying competitive and iterating on models in rapidly evolving technology markets.

What should I look for in a data center for AI infrastructure?

Prioritize facilities with a Power Usage Effectiveness rating below 1.2 and built-in support for liquid-cooled manifolds. You need a provider that offers super-fast networking, redundant power feeds, and at least 100Gbps uplinks for each rack. Ensure the data center has a Tier III certification to guarantee 99.982% annual uptime, which protects your AI infrastructure from costly and disruptive power interruptions.

SUPPORT

SUPPORT

3EX United States

3EX United States